The language of master data management (MDM) is packed with definitions, acronyms and abbreviations used by analysts, data scientists, CDOs and IT managers. This dictionary offers simple explanations of the most common MDM terms and helps you navigate the MDM landscape.

ADM - Application Data Management

ADM is the management and governance of the application data required to operate a specific business application, such as your ERP, CRM or supply chain management (SCM) system. Your ERP system’s application data is data associated with products and customers. This data may be shared with your CRM, but in general, application data is shared with only a few other applications and not widely across the enterprise.

ADM performs a similar role to MDM, but on a much smaller scale as it only enables the management of data used by a single application. In most cases, the ADM governance capabilities are incorporated into the applications, but there are specific ADM vendors that provide a full set of capabilities.

See also: Master Data

AI - Artificial Intelligence

In the MDM universe, AI is relevant in two ways. First, AI is an integral part of MDM, which uses AI and the underlying machine learning technology to classify data objects, such as products. AI searches for certain identifiers to find patterns in fragments of information, e.g., a few attributes or a picture of a product, and makes sure the product is classified correctly in the product taxonomy. This capability is used in product onboarding. If the object does not match the classification criteria, it can be sent to clerical review, which helps train the AI. Second, AI also relies on MDM when used in external applications. AI needs to know which identifiers to search for, and these identifiers are described and governed in the MDM data model. In this way, MDM ensures the results of AI processing are trustworthy.

See also: Generative AI, Machine Learning, Auto-Classification

More information:

- Generative AI for Product Information Needs Governed Data

- Advance Your AI Agenda with Master Data Management: Extract value from AI and machine learning faster with the power of MDM

API - Application Programming Interface

An API is an interface, built into most applications and operating systems, that allows one piece of software to interact with other software. In master data management, not all functions can necessarily be handled in the MDM platform itself. For instance, you may want to deliver product data from your MDM to the ecommerce system or validate customer data using a third-party service. The API makes sure your request is delivered and returns the response.

The use of APIs is a core capability of the MDM enterprise platform. As the single source of truth, the MDM needs to connect with a wide range of business systems across the enterprise.

See also: Connector, DaaS, Data Integration

Architecture

An MDM solution is not something you simply buy and start using. It needs to be fitted into your specific enterprise setup and integrated with the overall enterprise architecture and infrastructure, which is why defining the MDM architecture is one of the first steps in an MDM process. Your MDM strategy should include a map of your data flows and processes, showing which systems deliver source data and which rely on receiving accurate data to operate. This map will show where the MDM fits into the enterprise architecture.

See also: Implementation Styles

More information:

- Stibo Systems Platform

- How to Build Your Master Data Management Strategy in 7 Steps

- MDM Academy course: MDM Solution Architecture

Asset Data

Enterprise assets can be physical (equipment, buildings, vehicles, infrastructure) or non-physical (data, images, intellectual property). In either case, an asset is something owned by a company. Assets have data, such as specifications, location, bill of materials and unique ID. This data can be leveraged in different ways and needs to be managed, for instance for preventative maintenance. An MDM system can help describe how assets are functionally related, how they perform and how they are configured, essentially holding the digital twin of an asset. Reliable information on your assets can answer important questions like “What is the current condition of the assets supporting this business process? Who is using them? Where are they located?” Please note this important distinction: asset data is your assets’ master data, whereas data generated by your assets is called sensor data, time series data or IoT data. Master data changes infrequently, whereas IoT data is constantly changing and growing. Asset data represents what you know with certainty about your assets.

See also: DAM, Digital Twin

Attributes

In MDM, an attribute is a specification or characteristic that helps define an entity. For instance, a product can have several attributes, such as color, material, size, ingredients or components. Typical customer attributes are name, address, email and phone number. MDM supports the management and governance of attributes to provide accurate product descriptions, identify customers and create golden records. Having accurate and rich attributes is hugely important for the customer experience as well as for the data exchange with partners and authorities.

See also: Data Entity, Data Object, Data Governance, Golden Record

Augmented MDM

Augmented master data management applies machine learning (ML) and artificial intelligence (AI) to the management of master data. AI techniques can enhance data management capabilities and complement human intelligence to optimize learning and decision making. Augmented MDM enables companies to refine their master data so that business operations run more efficiently, and to transform their businesses to drive growth.

See also: AI

Auto-Classification

Auto-classification (also called computer-assisted or machine-supervised classification) is a method of automatically categorizing items into predefined categories, classes and industry standards using machine learning algorithms. It can be used as part of a product information management (PIM) system to automatically categorize and organize product information into different product categories and attributes, for example, when products are onboarded with a minimal set of data, such as brand name and item number. Auto-classification speeds up supplier item onboarding and produces more accurate classifications up front, which improves data quality because the correct templates are applied to the item records. Each predicted classification is associated with a confidence level, and if the confidence of the prediction falls below a set threshold, the product is sent to clerical review for manual classification. The manual classifications can in turn be used to retrain the classification algorithm.
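
The threshold-based routing described above can be sketched in a few lines. This is a minimal illustration, not a real classifier: the taxonomy, the keyword-overlap scoring in the hypothetical `predict` function and the `CONFIDENCE_THRESHOLD` value of 0.6 are all assumptions, standing in for a trained machine learning model.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical taxonomy: category -> indicative keywords. In a real PIM/MDM,
# a trained machine learning model would produce the prediction instead.
TAXONOMY = {
    "Power Tools": {"drill", "cordless", "saw", "battery"},
    "Paint": {"paint", "gloss", "matte", "primer"},
}
CONFIDENCE_THRESHOLD = 0.6  # assumed value; set per business requirements


@dataclass
class Prediction:
    category: str
    confidence: float


def predict(description: str) -> Prediction:
    """Stand-in classifier: confidence = share of keyword hits for the best category."""
    words = set(description.lower().split())
    hits = {cat: len(words & keywords) for cat, keywords in TAXONOMY.items()}
    total = sum(hits.values())
    best = max(hits, key=hits.get)
    return Prediction(best, hits[best] / total if total else 0.0)


def classify_or_review(item_id: str, description: str, review_queue: list) -> Optional[str]:
    """Auto-assign a category above the threshold; otherwise queue for clerical review."""
    prediction = predict(description)
    if prediction.confidence >= CONFIDENCE_THRESHOLD:
        return prediction.category
    review_queue.append((item_id, description, prediction))
    return None


review_queue = []
print(classify_or_review("A-1", "Cordless drill with battery", review_queue))  # Power Tools
print(classify_or_review("A-2", "Battery powered paint sprayer", review_queue))  # None
# Items in review_queue, once classified manually, could become new training data.
```

The second item matches keywords from both categories, so its confidence (0.5) falls below the threshold and it lands in the review queue instead of being classified automatically.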

BI - Business Intelligence

Business intelligence is a type of analytics. It analyzes the data that is generated and used by a company in order to find opportunities for optimization and cost cutting. It entails strategies and technologies that help organizations understand their operations, customers, financials, product performance and many other key business measurements. Master data management supports BI efforts by feeding the BI solution with clean and accurate master data.

See also: Data Analytics, Data Governance

Big Data

Big data is characterized by the three Vs: Volume (a lot of data), Velocity (data created at high speed) and Variety (data comes in many forms and ranges). Big data does not exist only on the internet but also within individual companies and organizations that need to capitalize on that data.

Finding patterns and inferring correlations in large data sets are common goals of big data projects. The purpose of using big data technologies is to capture the data and turn it into actionable insights. The information gathered from big data analytics can be linked to your master data and thereby provide new levels of insights.

See also: Data Analytics

BOM - Bill of Materials

In manufacturing, a bill of materials (BOM) is a list of the parts or components that are required to build a product. A BOM is similar to a formula or recipe, but whereas a recipe contains both the ingredients and the manufacturing process, the BOM only contains the needed materials and items. Manufacturers rely on different digital BOM management solutions to keep track of part numbers, units of measure, cost and descriptions.
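
To make the structure concrete, here is a minimal sketch of a single-level BOM as a list of lines, each carrying the part number, description, quantity, unit of measure and cost mentioned above. The bookshelf parts, prices and the `material_cost` helper are hypothetical; note that the BOM holds only materials, not the manufacturing steps.

```python
from dataclasses import dataclass


@dataclass
class BomLine:
    part_number: str
    description: str
    quantity: float
    unit_of_measure: str
    unit_cost: float  # cost per unit of measure, in an assumed currency


def material_cost(bom: list[BomLine]) -> float:
    """Total material cost of one finished product (materials only, no process steps)."""
    return sum(line.quantity * line.unit_cost for line in bom)


# Hypothetical single-level BOM for a simple bookshelf.
bookshelf_bom = [
    BomLine("PN-1001", "Side panel", 2, "pcs", 12.50),
    BomLine("PN-1002", "Shelf board", 4, "pcs", 8.00),
    BomLine("PN-2001", "Wood screw", 24, "pcs", 0.05),
]

print(f"{material_cost(bookshelf_bom):.2f}")  # 58.20
```

Real BOMs are often multi-level (a component may itself have a BOM), which this flat sketch deliberately leaves out.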

See also: Attributes

Business Rules

Business rules are the conditions or actions that modify data and data processes according to your data policies. You can define numerous business rules in your MDM to determine how your data is organized, categorized, enriched, managed and approved. Business rules are typically used in workflows, e.g., for the validation of data in connection with import or publishing. As such, business rules are a vital tool for your data governance and for the execution of your data strategy as they ensure the data quality and the outcome you want to achieve.
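
As an illustration of business rules used for validation on import, the sketch below models each rule as a small function that returns an error message or `None`. The rule names (`required`, `max_length`, `one_of`), the field names and the allowed units are hypothetical; an MDM platform would typically configure such rules rather than hard-code them.

```python
from typing import Callable, Optional

# A rule inspects a record and returns an error message, or None if it passes.
Rule = Callable[[dict], Optional[str]]


def required(field: str) -> Rule:
    return lambda r: None if r.get(field) else f"{field} is required"


def max_length(field: str, limit: int) -> Rule:
    return lambda r: (f"{field} exceeds {limit} characters"
                      if len(str(r.get(field, ""))) > limit else None)


def one_of(field: str, allowed: set) -> Rule:
    return lambda r: (None if r.get(field) in allowed
                      else f"{field} must be one of {sorted(allowed)}")


# Hypothetical rule set applied when product records are imported.
IMPORT_RULES: list[Rule] = [
    required("item_number"),
    required("name"),
    max_length("name", 40),
    one_of("unit_of_measure", {"pcs", "kg", "m"}),
]


def validate(record: dict, rules: list[Rule]) -> list[str]:
    """Run every rule and collect the error messages of those that fail."""
    results = (rule(record) for rule in rules)
    return [msg for msg in results if msg is not None]


def import_record(record: dict) -> str:
    """Return 'approved', or 'rejected' with the reasons.

    A real workflow might route rejected records to a data steward.
    """
    errors = validate(record, IMPORT_RULES)
    return "approved" if not errors else "rejected: " + "; ".join(errors)


print(import_record({"item_number": "A-1", "name": "Hammer", "unit_of_measure": "pcs"}))  # approved
print(import_record({"item_number": "", "name": "Hammer", "unit_of_measure": "box"}))
```

Keeping each rule independent makes it easy to add, remove or reuse rules across workflows such as import and publishing.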

See also: Workflow

More information:

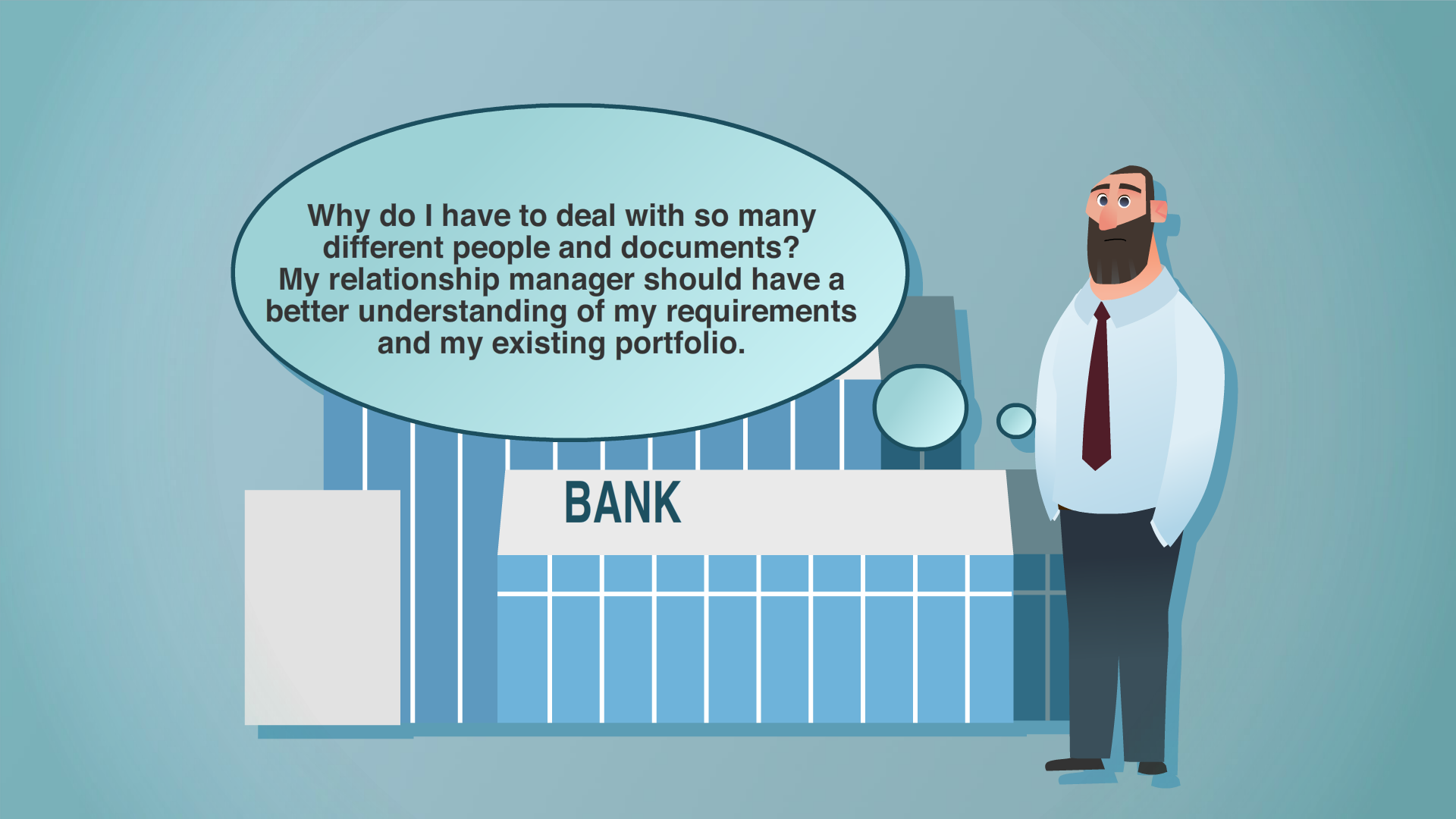

CDI - Customer Data Integration

CDI is the process of combining customer information acquired from internal and external sources to generate a consolidated customer view, also known as the golden record or a customer 360° view. Integration of customer data is the main purpose of a Customer MDM solution that acquires and manages customer data in order to share it with all business systems that need accurate customer data, such as the CRM or CDP.
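
A minimal sketch of consolidating customer records into a golden record follows. It assumes the records have already been matched as the same customer, and it applies one common survivorship rule as an assumption: for each attribute, the most recently updated non-empty value wins. The source systems, dates and field names are hypothetical.

```python
from datetime import date

# Hypothetical, already-matched records for the same customer from three systems.
source_records = [
    {"source": "CRM", "updated": date(2024, 3, 1),
     "name": "Jane Smith", "email": "jane@example.com", "phone": ""},
    {"source": "ERP", "updated": date(2024, 5, 10),
     "name": "Jane A. Smith", "email": "", "phone": "+1 555 0100"},
    {"source": "Webshop", "updated": date(2023, 11, 20),
     "name": "J. Smith", "email": "j.smith@example.com", "phone": "+1 555 0199"},
]

ATTRIBUTES = ["name", "email", "phone"]


def build_golden_record(records: list[dict]) -> dict:
    """Survivorship rule (assumed): the most recently updated non-empty value wins."""
    newest_first = sorted(records, key=lambda r: r["updated"], reverse=True)
    golden = {}
    for attr in ATTRIBUTES:
        for record in newest_first:
            if record.get(attr):
                golden[attr] = record[attr]
                golden[attr + "_source"] = record["source"]  # remember where it came from
                break
    return golden


print(build_golden_record(source_records))
```

Here the name and phone survive from the ERP record, while the email falls back to the CRM because the newer ERP record has no email. Other survivorship rules, such as trusting a specific source per attribute, are equally valid choices.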

See also: Customer MDM

CDP - Customer Data Platform

A customer data platform is a marketing system that unifies a company’s customer data from marketing and other channels to optimize the timing and targeting of messages and offers. A Customer MDM platform can support a CDP by ensuring the customer data that is consumed by the CDP is updated and accurate and by uniquely identifying customers. The CDP can manage large quantities of customer data while the MDM ensures the quality of that data. By linking the CDP data to other master data, such as product and supplier data, the MDM can maximize the potential of the data.

See also: CRM, Customer MDM

Centralized Style

The centralized implementation style refers to a system architecture in which the MDM system uses data governance algorithms to cleanse and enhance master data and then pushes the data back to its respective source systems. This way, data is always accurate and complete where it is being used. The centralized style also allows you to create master data in the MDM, making it the system of origin of the information. This means you can centralize data creation functions for the supplier, customer and product domains in a distributed environment by creating the entity in the MDM and enriching it through external data sources or internal enrichment.

See also: Implementation styles, Coexistence style, Registry style, Consolidation style

Change Management

Change management is the preparation and education of individuals, teams and organizations in making organizational change. Change management is not specific to implementing a master data management solution; it is, however, a necessity in any MDM implementation if you want to maximize the ROI. Implementing MDM is not just a technology fix; it is just as much about changing processes and mindsets. As MDM aims to break down data silos, it will inevitably raise questions about data ownership and departmental accountabilities.

Cloud MDM

A hosted cloud MDM solution runs on third-party servers. This means organizations don’t need to install, configure, maintain and host the hardware and software, which is outsourced and offered as a subscription service. Cloud MDM offers many operational advantages, such as elastic scalability, automated backup and around-the-clock monitoring. Most companies choose a hosted cloud MDM solution over an on-premises solution, or choose to migrate their existing solution to the cloud.

See also: SaaS

Coexistence Style

The coexistence implementation style refers to a system architecture in which your master data is stored in the central MDM system and updated in its source systems. This way, data is maintained in source systems and then synchronized with the MDM hub, so data can coexist harmoniously and still offer a single version of the truth. The benefit of this architecture is that master data can be maintained without re-engineering existing business systems.

See also: Implementation styles, Centralized style, Registry style, Consolidation style

Composable Commerce

Composable commerce is an innovative approach to digital commerce characterized by a modular architecture consisting of independent components. These components can be flexibly assembled to tailor-fit a business's unique requirements. Building upon the concept of headless commerce, which involves decoupling the customer-facing technology layer from backend systems, composable commerce takes this separation to the next level. It prioritizes heightened control and adaptability to curate an enhanced digital experience for consumers.

See also: Headless Commerce

Connector

Connectors are application-independent programs that perform transfer of data or messages between business systems or external sources and applications, such as connecting your MDM platform to your ERP, analytics or to data validation sources or marketplaces. Connectors are a vital architectural component to centralize data and automate data exchange.

See also: API

Consolidation Style

The consolidation style refers to a system architecture in which master data is consolidated from multiple sources in the master data management hub to create a single version of truth, also known as the golden record.

See also: Implementation styles, Centralized style, Registry style, Coexistence style

Contextual Master Data

Contextual (or situational) master data management refers to the management of changeable master data as opposed to traditional, more static, master data. As products and services become more complex and personalized, so does the data, making its management equally complex. Dynamic, contextual MDM takes into account that the master data required to support some real-world scenarios changes. For example, certain personalized products can only be created and modeled in conjunction with specific customer information.

CRM - Customer Relationship Management

CRM is a system that can help businesses manage customer and business relationships and the data and information associated with them. For smaller businesses a CRM system can be enough to manage the complexity of customer data, but in many cases organizations have several CRM systems used to various degrees and with various purposes. For instance, the sales and marketing organization will often use one system, the financial department another, and perhaps procurement a third. MDM can provide the critical link between these systems. It does not replace CRM systems but supports and optimizes the use of them.

See also: ERP, Customer MDM

Customer MDM

Customer master data management is the governance of customer data to ensure unique identification of one customer as distinct from another. The aim is to get one single and accurate set of data on each of your business customers, the so-called 360° customer view, across systems and locations in order to get a better understanding of your customers. Customer MDM is indispensable for all systems that need high-quality customer data to perform, such as CRM or CDP. Customer MDM is also vital for compliance with data privacy regulations.

See also: CRM, CDP, GDPR

DaaS - Data as a Service

Data as a Service is a cloud-based data distribution service focused on the real-time delivery of data at scale to high-volume data-consuming applications. It is an always-on service that lets internal applications and customer-facing channels pull in data when and where it is needed, anywhere in the world. As part of MDM, DaaS delivers a near real-time version of master data through a configurable API and serverless architecture. This removes the need to maintain several API services as well as the need to create multiple copies of data for each application.

See also: SaaS

More information:

- Master Data Management with a DaaS Extension for Data Consumption at High Scale

- Making Master Data Accessible: What is Data as a Service?

DAM - Digital Asset Management

Digital asset management (DAM) is the repository and management of digital assets, such as images, videos, digital files and their metadata. Important DAM capabilities include metadata management, version control, classification, linking, search and filter, workflow management and user-friendly asset import. Many businesses have a stand-alone DAM solution. When digital assets are needed for ecommerce, retail or distribution, the DAM needs to be integrated with the product information management system to avoid delaying processes and causing poor user experiences. Master data management (MDM) supports digital asset management by connecting digital assets to the appropriate master data records. DAM can be an integrated function in MDM, making it easy to accurately associate digital assets with individual products.

More information:

- What is Digital Asset Management?

- Deliver a Great Experience with a Strategic Approach to Digital Asset Management (DAM)

Data Analytics

Data analytics is the discovery of meaningful patterns in data. For businesses, analytics are used to gain insight and thereby optimize processes and business strategies. MDM can support analytical efforts by connecting data, upgrading the quality of data across the organization and providing organized master data as the basis of the analysis. Furthermore, MDM data can be shared with business intelligence solutions to help provide a common corporate data framework and hierarchies for business data analysis, and to fuel future developments in AI.

Data Augmentation

Data augmentation is a technique used in data science and machine learning to increase the size and diversity of a data set by creating new samples from existing data through modifications or transformations. The goal of data augmentation is to enhance the quality and robustness of the data and improve the accuracy and generalization of machine learning models. Master data augmentation refers to the process of enriching or expanding the existing master data of an organization by integrating new or external data sources. This can involve adding new attributes or fields to the existing master data, as well as updating or enriching the existing attributes with additional information. The goal is to improve the accuracy, completeness and relevance of the master data to enable better decision-making and execution of business processes. By incorporating new data sources and expanding the scope of the master data, organizations can gain a more comprehensive view of their operations and assets.
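
The master data augmentation described above can be sketched as a merge of external data into an existing record. The merge policy here is an assumption chosen for illustration: new attributes are added, empty attributes are filled, and conflicting values are reported for review rather than silently overwritten. The supplier record and directory data are hypothetical.

```python
def augment(master: dict, external: dict) -> tuple[dict, list[str]]:
    """Merge external data into a master record.

    Assumed policy: new attributes are added, empty attributes are filled,
    and conflicting values are kept as-is but reported for review.
    """
    enriched = dict(master)  # leave the original record untouched
    conflicts = []
    for attr, value in external.items():
        current = enriched.get(attr)
        if current in (None, ""):
            enriched[attr] = value
        elif current != value:
            conflicts.append(attr)
    return enriched, conflicts


supplier = {"id": "S-42", "name": "Acme Ltd", "country": "", "vat_number": "GB123"}
# Hypothetical data from an external business directory.
directory_data = {"country": "GB", "industry_code": "4690", "vat_number": "GB999"}

enriched, conflicts = augment(supplier, directory_data)
print(enriched)
print(conflicts)  # ['vat_number']
```

Flagging conflicts instead of overwriting keeps governed values under human control while still gaining the new attributes.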

See also: Augmented MDM

Data Catalog

A data catalog is a tool that provides an organized collection of metadata, descriptions and information about the data assets available within an organization. A data asset can refer to any type of structured or unstructured data that is generated, collected or maintained by an organization. The purpose of a data catalog is to make it easier for users to discover, understand and access data assets. The catalog typically provides a searchable and browsable interface that allows users to find and explore data assets based on various attributes, such as name, type, format, owner, and usage. Master data management (MDM) and data catalogs are complementary technologies that can work together to improve data management practices. MDM provides a centralized repository for managing the most important data assets, such as customer and product data, while a data catalog provides an interface for discovering and accessing data assets across the organization.

Data Cleansing

Data cleansing is the process of identifying, removing and correcting inaccurate data records, for example by deduplicating data. One type of error that data cleansing addresses is invalid data: each piece of data assigned to a specific attribute should conform to the rules of that attribute. Consistency is another critical aspect affected by poor data cleansing practices. As brand ranges grow, product or brand descriptions for attributes should remain consistent or evolve together. Cleansing data is an integral and basic process of master data management, as it eliminates the problems of useless data and enhances the overall quality and reliability of information within the company. By addressing both validity and consistency issues during data cleansing, organizations can improve their ability to make data-driven decisions and deliver great customer experiences.
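
A minimal sketch of two cleansing steps mentioned above, validity checking and deduplication. The validation patterns and the five-digit postal code format are assumptions, and duplicates are detected by case-insensitive email only, which is a deliberate simplification of the matching an MDM system would normally perform.

```python
import re

# Hypothetical validity rules: each attribute value must fully match its pattern.
ATTRIBUTE_RULES = {
    "email": re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+"),
    "postal_code": re.compile(r"\d{5}"),  # assumes five-digit postal codes
}


def is_valid(record: dict) -> bool:
    return all(pattern.fullmatch(record.get(attr, ""))
               for attr, pattern in ATTRIBUTE_RULES.items())


def cleanse(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Trim values, reject invalid records and drop duplicates.

    Duplicates are detected by case-insensitive email only, a simplification
    of real MDM matching (which may use fuzzy matching on several attributes).
    """
    clean, rejected, seen = [], [], set()
    for record in records:
        record = {key: value.strip() for key, value in record.items()}
        if not is_valid(record):
            rejected.append(record)
            continue
        match_key = record["email"].lower()
        if match_key in seen:
            continue  # duplicate of a record that has already been kept
        seen.add(match_key)
        clean.append(record)
    return clean, rejected


customers = [
    {"name": "Ann Lee", "email": "ann@example.com", "postal_code": "10115"},
    {"name": "Ann Lee", "email": " ANN@example.com ", "postal_code": "10115"},
    {"name": "Bo Chan", "email": "bo-at-example.com", "postal_code": "2000"},
]
clean, rejected = cleanse(customers)
print(len(clean), len(rejected))  # 1 1
```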

See also: Deduplication

Data Democratization

Democratizing data means making data available to all layers of an organization and its business ecosystem, across departmental boundaries. Hence, the opposite of data democratization is storing data in silos managed and controlled by a single department without visibility for others. The upside of data democratization is that it removes barriers for the talent in your organization by providing the right access to data to enable timely and informed decision making. Data democratization is only possible if data is transparent. That includes its quality and sources, how it is shared and used, and who is accountable for the data quality as well as for the interpretation of the data.

See also: Data silo, data transparency

Data Domain

In master data management, a data domain refers to a specific set or category of data that share common characteristics, such as data type, purpose, source, format, or usage. Data domains are often used to organize and categorize data assets within an organization, and they play a critical role in data governance and data quality management. For example, the customer data domain includes data such as name, address, email, phone number and demographic information. Another example could be product data, including attributes such as size, color, function, technical specifications and item number. Different domains can be governed in conjunction and thus provide new valuable insights, such as where and how a product is used. By defining data domains and their attributes, organizations can establish a common understanding of their data and ensure consistency, accuracy, and completeness across different systems and applications. This, in turn, can help organizations make better data-driven decisions, improve operational efficiency, and comply with regulatory requirements.

See also: Multidomain, Data governance, MDM, PIM, Zones of Insight

Data Enrichment

Data enrichment refers to the process of enhancing, refining or improving existing data sets by adding new information, attributes or context to them. The goal of data enrichment is to increase the value, relevance and accuracy of the data for analysis, decision-making and improving customer experiences. Product data enrichment is particularly important for providing good customer experiences and preventing product returns. Customer data enrichment can help companies gain a more comprehensive view of their customers. Data enrichment can involve various techniques and sources, such as:

- Data augmentation: adding new data points or fields to existing data sets, such as demographic, geographic, behavioral or transactional data.

- Data integration: combining data from multiple sources or systems to create a unified view of the data.

Data Fabric

Data fabric is a data architecture that connects and enhances your operating systems to provide these systems with clean, real-time data at scale. It’s an integrated layer on top of systems that these systems can subscribe to. It is designed to provide a unified view of data that can be accessed and used by different applications and users within an organization. Data fabric improves collaboration through a common shared data language and democratization of data. A multidomain master data management platform can facilitate a data fabric through integration of data sources and built-in data governance. However, data fabric extends beyond the capabilities of a single technology. It typically includes a variety of technologies and components, including data virtualization engines, data integration tools, metadata management systems and data governance policies. These components work together to provide a comprehensive view of data that can be accessed and used by different stakeholders. One of the key benefits of a data fabric is that it enables organizations to access and analyze data in real-time, regardless of where the data is located or how it is stored.

Data Governance

Data governance is a collection of practices and processes aiming to create and maintain a healthy organizational data framework. It is a set of policies, procedures and guidelines that are put in place to ensure that data is accurate, consistent and compliant with regulations and standards. Data governance can include creating policies and processes around version control, approvals, etc., to maintain the accuracy and accountability of the organizational information. Data governance is not a technical discipline as such, but a discipline that ensures data is fit for purpose. Data governance includes a variety of activities, such as data quality management, data security, data privacy, data classification, data lifecycle management and data stewardship. These activities are designed to ensure that data is properly managed and used throughout its lifecycle, from creation to deletion. Data governance is typically led by a data governance team or committee that is responsible for creating and enforcing data policies and standards. This team may include representatives from various departments within an organization, such as IT, legal, compliance and business operations. Data governance can be supported by master data management capabilities that are configurable to execute data policies.

More information:

- What Is Master Data Governance – And Why You Need It?

- How to Develop Clear Data Governance Policies and Processes for Your MDM Implementation

- Ten Useful Steps to Master Data Governance

- Master Data Governance Library

Data Governance Framework

A data governance framework is a collection of processes, policies, standards, and metrics that ensure effective and efficient use of information. It includes the organizational structure, roles and responsibilities, and the tools and technologies used to manage and protect data.

See also: Data Policy

More information:

- The Ultimate Guide to Building a Data Governance Framework

- Creating a successful data governance operating model

- Master Data Management Framework: Get Set for Success

- What is a Data Quality Framework?

Data Hierarchy

In the context of master data management (MDM), data hierarchy refers to the organization and classification of data elements based on their level of importance and their relationships to each other. At the top of the data hierarchy are the master data domains. These are the core data entities, such as customers, products, suppliers and employees. Beneath a master data domain, e.g., product, are the different product types and classes. Under these you will find the stock keeping units (SKU) and their attributes. At the bottom of the data hierarchy is the reference data, which includes categories like product classifications, industry codes and currency codes. Master data management helps organize data into logical hierarchies, which is essential for becoming a data-driven organization.
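
The levels described above form a tree, which can be sketched with nested dictionaries: domain, then product class, then SKU with its attributes, with currency codes acting as reference data. The classes, SKUs and the `find_path` and `skus` helpers are hypothetical and only illustrate the layering, not how an MDM stores hierarchies internally.

```python
from typing import Optional

# Hypothetical hierarchy: domain -> product class -> SKU -> attributes.
# Currency values are reference data and must come from an agreed code list.
REFERENCE_DATA = {"currency": {"EUR", "USD"}}

hierarchy = {
    "Product": {
        "Power Tools": {
            "SKU-1001": {"name": "Cordless drill", "price": 99.0, "currency": "EUR"},
            "SKU-1002": {"name": "Circular saw", "price": 149.0, "currency": "EUR"},
        },
        "Paint": {
            "SKU-2001": {"name": "Primer 1L", "price": 12.0, "currency": "USD"},
        },
    }
}


def find_path(tree: dict, node: str, path: tuple = ()) -> Optional[tuple]:
    """Return the chain of levels from the top of the hierarchy down to `node`."""
    for key, child in tree.items():
        if key == node:
            return path + (key,)
        if isinstance(child, dict):
            found = find_path(child, node, path + (key,))
            if found:
                return found
    return None


def skus(tree: dict, domain: str) -> dict:
    """Flatten the classes of one domain into {sku: attributes}."""
    return {sku: attrs for cls in tree[domain].values() for sku, attrs in cls.items()}


print(find_path(hierarchy, "SKU-2001"))  # ('Product', 'Paint', 'SKU-2001')
# Check that every SKU uses a currency code from the reference data.
bad = [s for s, a in skus(hierarchy, "Product").items()
       if a["currency"] not in REFERENCE_DATA["currency"]]
print(bad)  # []
```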

See also: Data Modeling, Data Domain, Reference Data

Data Hub

A data hub, or an enterprise data hub (EDH), is a database which is populated with data from one or more sources and from which data is taken to one or more destinations. A master data management system is an example of a data hub, and therefore sometimes goes under the name master data management hub.

Data Integration

Data integration is the process of combining data from different sources into a unified view. It involves transforming and consolidating data from various sources such as databases, cloud-based applications and APIs into a single, coherent dataset. One of the biggest advantages of a master data management solution is its ability to integrate with various systems and link all of the data held in each of them to each other. A system integrator will often be brought on board to provide the implementation services.

See also: API

Data Lake

A data lake is a central place to store your data, usually in its raw form without changing it. The idea of the data lake is to provide a place for the unaltered data in its native format until it’s needed. Data lakes allow organizations to store both structured and unstructured data in a single location, such as sensor data, social media data, weblogs and more. The data can be stored in different formats. Data lakes are highly scalable, allowing organizations to store and process vast amounts of data as needed, without worrying about storage or processing limitations. Certain business disciplines, such as advanced analytics, depend on detailed source data. However, since the data in a data lake has not been curated and derives from many different sources, this introduces an element of risk when the ambition is data-driven decision making. Data that is stored in a data lake is not reconciled and validated and may therefore contain duplicate or inaccurate information. Applying master data management can improve the accuracy and help identify relationships and thus enhance the transparency.

See also: Data Warehouse

Data Lineage

Data lineage refers to the detailed history of the data's life cycle, including its origins, transformations, and movements over time. It helps in understanding the data flow from its source to its final destination, providing transparency and aiding in data quality management, regulatory compliance, and troubleshooting.
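
One way to picture data lineage is a value that carries a log of every step applied to it. The sketch below is an assumption-based illustration: the `LineageEvent` and `TrackedValue` classes, the step names and the CRM, MDM and Webshop systems are hypothetical, and real lineage tools capture this metadata automatically across pipelines.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageEvent:
    step: str      # e.g. "extract", "transform", "load"
    system: str    # where the step happened
    detail: str
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class TrackedValue:
    value: object
    lineage: list = field(default_factory=list)

    def apply(self, step: str, system: str, detail: str, fn=None) -> "TrackedValue":
        """Record a step and optionally transform the value; returns a new object."""
        new_value = fn(self.value) if fn else self.value
        return TrackedValue(new_value, self.lineage + [LineageEvent(step, system, detail)])


phone = TrackedValue(" (555) 0100 ")
phone = phone.apply("extract", "CRM", "read customer.phone")
phone = phone.apply("transform", "MDM", "strip whitespace", str.strip)
phone = phone.apply("transform", "MDM", "keep digits only",
                    lambda v: "".join(ch for ch in v if ch.isdigit()))
phone = phone.apply("load", "Webshop", "published to customer profile")

print(phone.value)  # 5550100
for event in phone.lineage:
    print(event.step, event.system, event.detail)
```

Because each step is logged with its system and timestamp, the history can be followed back from the published value to its origin in the source system.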

Data Maintenance

In order for any data management investment to continue delivering value, you need to maintain every aspect of a data record, including hierarchy, structure, validations, approvals and versioning, as well as master data attributes, descriptions, documentation and other related data components. Maintenance is often enabled by automated workflows, pushing out notifications to, e.g., data stewards when there’s a need for a manual action. Maintenance is an important and ongoing process of any MDM implementation.

Data Mesh

Data mesh is an approach to data architecture that emphasizes the decentralization and democratization of data ownership and governance. It aims to address some of the common challenges of traditional centralized data architectures, such as slow time-to-market, siloed data and lack of agility. Traditional data storage systems can continue to exist but instead of a centralized data team that owns and governs all data, data mesh advocates for data ownership to be distributed among cross-functional teams that produce and consume data. This federated system helps eliminate many operational bottlenecks. The key idea behind data mesh is to treat data as a product and apply product thinking principles to data management. This implies clear product ownership, product roadmaps and product metrics. Data products should be designed to meet the specific needs of consumers, and should be evaluated based on their value to the business. While data mesh and master data management have different approaches to data management, they can complement each other in a hybrid data management approach. For example, domain-specific teams in a data mesh architecture can use MDM to ensure that their data products are consistent with enterprise-wide master data standards. In this way, data mesh and MDM can work together to ensure that data is accurate, consistent, and trustworthy across the enterprise.

See also: MDM

Data Modeling

Data modeling is the process of creating a conceptual or logical representation of data objects, their relationships and the rules that govern them. It defines the structure, constraints and organization of data in a way that enables efficient data management and analysis and supports your business model. Data modeling is an essential component of master data management (MDM) because it provides the framework for organizing and managing master data. In MDM, data modeling is used to define the attributes, relationships and hierarchies of master data entities such as customers, products and locations. It also helps identify and resolve data conflicts and duplicates by establishing unique identifiers and rules for data matching and merging. In practice, data modeling takes place at the beginning of an MDM implementation, when you map and define the relationships between your core enterprise entities, for instance your products and their attributes. Based on that mapping, you create the master data model that best fits your organizational setup.
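As a minimal sketch, the entities, unique identifiers, hierarchy and cross-domain relationship described above can be expressed with Python dataclasses. The entity names, fields, example values and the one-record-per-ID rule are hypothetical examples, not a prescribed MDM model:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Category:
    """A node in a product hierarchy; the root category has no parent."""
    code: str
    name: str
    parent: Optional["Category"] = None

    def path(self) -> list[str]:
        """Return category names from the root down to this node."""
        names, node = [], self
        while node is not None:
            names.append(node.name)
            node = node.parent
        return names[::-1]


@dataclass
class Supplier:
    supplier_id: str  # unique identifier used for matching and merging
    name: str


@dataclass
class Product:
    product_id: str  # unique identifier, e.g. an internal item number
    name: str
    category: Category  # hierarchy relationship
    supplier: Supplier  # cross-domain relationship: product -> supplier
    attributes: dict[str, str] = field(default_factory=dict)


class ProductRegistry:
    """Holds products and enforces a simple rule: one record per product_id."""

    def __init__(self) -> None:
        self._products: dict[str, Product] = {}

    def add(self, product: Product) -> None:
        if product.product_id in self._products:
            raise ValueError(f"duplicate product_id: {product.product_id}")
        self._products[product.product_id] = product

    def get(self, product_id: str) -> Optional[Product]:
        return self._products.get(product_id)


# Usage with hypothetical example values
tools = Category("10", "Tools")
drills = Category("1010", "Drills", parent=tools)
acme = Supplier("S-001", "Acme")
drill = Product("P-100", "Cordless drill", drills, acme, {"voltage": "18 V"})
registry = ProductRegistry()
registry.add(drill)
print(drills.path())
```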

Data Monetization

Data monetization is generally understood as the “process of using data to obtain quantifiable economic benefit” (Gartner Glossary, Data Monetization). There are three typical ways to achieve this:

- Using data to make better decisions and thus enhance your business performance

- Sharing data with business partners for mutual benefit

- Selling data as a product or service

Whichever way you choose, data monetization becomes more profitable if you can put the data in context and make it insightful. The more insight your data provides, the more valuable it is. Multidomain master data management can help add that context and thereby increase the value of your data.

Data Object

The data object is what you aim to identify uniquely and enrich using master data management (MDM). A data object has a unique ID and a number of attributes, some of which it may share with other data objects; for example, two specific customers may share the same address. Products, suppliers, customers and locations are examples of data object types in a master data management context. Data objects are organized in data models that can contain relations between them, and a multidomain MDM solution can show relations across data object types.

See also: Data Modeling

Data Onboarding

Data onboarding is the process of transferring data from an external source into your system, e.g., product data from suppliers or content service providers into your PIM system, or data from an offline database into an online environment. Onboarding can be handled by data integration via an API, by a data onboarding tool or by importing XML files. The speed of data onboarding can be crucial for reducing your time to market. Sophisticated onboarding processes help maintain data quality by flagging duplicates or incomplete data. If your onboarding tool is supported by AI, it may be enough to onboard fragments of information, such as an item number or GTIN and a brand name.
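To illustrate the flagging step, here is a minimal Python sketch that splits an incoming supplier batch into accepted, duplicate and incomplete records. The required fields, the placeholder identifiers and the use of the GTIN as the duplicate key are assumptions chosen for the example, not a fixed rule:

```python
# Assumed minimum attribute set for an onboarded product record.
REQUIRED_FIELDS = ("gtin", "brand", "name")


def onboard(incoming: list[dict], existing_gtins: set[str]) -> dict[str, list[dict]]:
    """Split an incoming batch into accepted, duplicate and incomplete records."""
    result: dict[str, list[dict]] = {"accepted": [], "duplicate": [], "incomplete": []}
    seen = set(existing_gtins)  # GTINs already in the PIM plus those accepted so far
    for record in incoming:
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            result["incomplete"].append({**record, "missing": missing})
        elif record["gtin"] in seen:
            result["duplicate"].append(record)
        else:
            seen.add(record["gtin"])
            result["accepted"].append(record)
    return result


# Placeholder identifiers, not real GTINs
batch = [
    {"gtin": "111", "brand": "BrandA", "name": "Drill"},
    {"gtin": "111", "brand": "BrandA", "name": "Drill"},  # repeated in the same batch
    {"gtin": "222", "brand": "", "name": "Saw"},  # brand missing
    {"gtin": "333", "brand": "BrandB", "name": "Hammer"},  # already in the PIM
]
report = onboard(batch, existing_gtins={"333"})
print({status: len(rows) for status, rows in report.items()})
```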

Data Policy

Your data policy is a set of rules and guidelines for data management that ensures data quality and aligns data processes with business goals. It defines data ownership and stewardship, as well as how data is stored and shared. A thorough data policy should be in place before any system implementation to ensure accountability and to keep data clean and fit for purpose.

Data Pool

A data pool is a repository of data where trading partners can maintain and exchange information about products in a standard format. Suppliers can, for instance, export data to a data pool that cooperating retailers can then import into their ecommerce system. Data pools, such as 1WorldSync and GS1, enable business partners to synchronize data and reduce their time to market. An MDM system that supports various data pool formats can facilitate the data exchange and minimize the manual work needed.

See also: GS1

More information:

- Connect your applications to take your data further, faster

- Achieve Effortless Compliance with Industry Standards

Data Quality

Data quality, or just DQ, refers to the overall accuracy, completeness, consistency, timeliness and relevance of data. The quality of data can have a significant impact on the accuracy and effectiveness of decision-making processes, as well as on the success of business operations that rely on data.

The quality of data is therefore of particular interest to data analysts and business intelligence teams. Accuracy refers to the extent to which data reflects reality, and it involves ensuring that the data is correct and free from errors. Completeness refers to the extent to which all necessary data is available, and there are no missing values. Consistency refers to the extent to which data is uniform and follows predefined standards, so it can be compared or analyzed. Timeliness refers to the extent to which data is available when it is needed and reflects the current state of affairs. Finally, relevance refers to the extent to which data is useful and applicable to a specific task or purpose.

In most cases, 100% data accuracy is neither attainable nor desirable. Most organizations aim for data quality that is 'fit for purpose'. The primary job of master data management is to ensure the quality of master data so that it supports well-informed decision making.
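Several of the dimensions described above can be expressed as simple ratios. The sketch below is a minimal illustration that scores completeness, consistency and timeliness, assuming hypothetical customer records, field names, format patterns and a 365-day freshness threshold. Accuracy and relevance are left out because they usually require a trusted reference or a business context to compare against:

```python
import re
from datetime import date

# Hypothetical customer records; None marks a missing value.
records = [
    {"id": "C1", "email": "ann@example.com", "country": "DK", "updated": date(2024, 5, 1)},
    {"id": "C2", "email": None, "country": "DK", "updated": date(2021, 1, 15)},
    {"id": "C3", "email": "bob[at]example.com", "country": "dk", "updated": date(2024, 3, 9)},
]


def completeness(rows: list[dict], field: str) -> float:
    """Share of rows in which the field has a value."""
    return sum(r[field] is not None for r in rows) / len(rows)


def consistency(rows: list[dict], field: str, pattern: str) -> float:
    """Share of non-missing values that follow a predefined format (a regex here)."""
    values = [r[field] for r in rows if r[field] is not None]
    if not values:
        return 0.0
    return sum(re.fullmatch(pattern, v) is not None for v in values) / len(values)


def timeliness(rows: list[dict], field: str, as_of: date, max_age_days: int) -> float:
    """Share of rows updated within the allowed age."""
    return sum((as_of - r[field]).days <= max_age_days for r in rows) / len(rows)


as_of = date(2024, 6, 1)
print(completeness(records, "email"))
print(consistency(records, "country", r"[A-Z]{2}"))
print(consistency(records, "email", r"[^@\s]+@[^@\s]+\.[a-z]+"))
print(timeliness(records, "updated", as_of, max_age_days=365))
```

What counts as 'fit for purpose' then becomes a question of which thresholds each score must meet for a given use.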

Data Silo

The term data silo describes a situation where crucial data or information, such as master data, is stored and managed separately by individuals, departments, regions or systems. As a result, the data is not easily accessible to or shared with other parts of the organization. In other words, data silos are a barrier that prevents the free flow of information within an organization. Data silos are often created unintentionally as different departments adopt different software systems or databases to manage their data. These silos can make it difficult for other departments to access important information, which can lead to redundant efforts, inconsistencies and inaccurate or incomplete data analysis. Master data management can help mitigate the negative impact of data silos by integrating with business applications, such as ERPs and CRMs, and thus providing a single source of truth.

More information:

- Data Silos Are Problematic. Here's How You Turn them into Zones of Insight

- Connecting Siloed Data with Master Data Management

Data Swamp

A data swamp occurs when a large volume of data is collected and stored without proper organization, management or governance. The result is a poorly managed repository that lacks structure, making the data difficult or impossible to use for analysis or decision-making. Data swamps typically arise when organizations collect data without a clear understanding of how it will be used or how it fits into the organization's broader goals and objectives. The consequences can include wasted resources, reduced productivity and even regulatory issues, as poorly managed data may be subject to compliance violations or data breaches. To prevent a data swamp, organizations must adopt a data management strategy that includes proper data governance, metadata management and data quality controls. Master data management capabilities help streamline data to make it trustworthy and actionable.

See also: Data Lake

Data Synchronization

Synchronization of master data is the process of ensuring that data is consistent and up-to-date across multiple systems or devices. Master data management ensures that all users have access to the most recent and accurate master data. Data synchronization is crucial in situations where multiple users or systems need to access and modify the same data, such as in a collaborative work environment or a mobile application that accesses data from a central server.

Data Syndication

Syndicating data is important for manufacturers and brand owners in order to share accurate, channel-optimized product data and content with retailers, distributors, data pools and marketplaces. Data syndication entails mapping, transforming and validating data. A master data management solution can automate the syndication process using built-in support for industrial classification standards or an integrated data syndication tool that is flexible enough to adapt to retailer requirements as they change over time.
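The mapping, transforming and validating steps can be sketched as follows. The retailer field names, the unit conversion and the title-length rule are hypothetical channel requirements made up for this example:

```python
# Hypothetical requirements for one retailer channel:
# target field -> (source field in the master record, transformation)
RETAILER_MAPPING = {
    "ProductTitle": ("name", lambda s: " ".join(s.split())),
    "Brand": ("brand", str.upper),
    "WeightKg": ("weight_g", lambda grams: round(grams / 1000, 3)),
}
RETAILER_REQUIRED = {"ProductTitle", "Brand", "WeightKg"}
MAX_TITLE_LENGTH = 40  # assumed channel rule


def syndicate(product: dict) -> tuple[dict, list[str]]:
    """Map, transform and validate one master record for a retailer feed."""
    feed: dict = {}
    errors: list[str] = []
    for target, (source, transform) in RETAILER_MAPPING.items():  # map + transform
        if product.get(source) is not None:
            feed[target] = transform(product[source])
    for field_name in sorted(RETAILER_REQUIRED - set(feed)):  # validate completeness
        errors.append(f"missing {field_name}")
    if len(feed.get("ProductTitle", "")) > MAX_TITLE_LENGTH:  # validate channel rule
        errors.append("ProductTitle exceeds channel limit")
    return feed, errors


print(syndicate({"name": " Cordless  drill ", "brand": "acme", "weight_g": 1450}))
print(syndicate({"name": "Cordless drill"}))
```

Keeping the mapping as data rather than code is one way to let the rules change as retailer requirements change.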

Data Transparency

Data transparency refers to the end-to-end insight into your most valuable data. It involves breaking down silos and barriers that obstruct the visibility, clarity and flow of trusted data. Data transparency includes knowledge about your data's completeness, where it comes from, who can access it, who is accountable for it, etc. Having data transparency enables you to comply with data regulations and standards and make better decisions based on insight. This insight is particularly important in order to meet the growing sustainability demands.

More information:

- How Data Transparency Enables Sustainable Retailing

- Achieving Supply Chain Transparency with Supplier Data

Data Warehouse

A data warehouse is a large, centralized repository of data that is used for reporting, analysis, and business intelligence. It is designed to store and manage data from various sources in a structured way, making it easier to access and analyze. The purpose of a data warehouse is to provide a single source of accurate, consistent and integrated data that can be used to make informed business decisions. Data warehouses are typically designed to support complex queries and analysis, rather than simple transaction processing. They are often used by businesses to consolidate data from disparate sources, such as transactional databases, customer relationship management systems and other sources, into a single location that can be easily accessed and analyzed. A master data management solution enhances the functionality of data warehouses by feeding trusted data into the database and by uniquely identifying entities.

See also: Data Lake

Deduplication

Deduplication of data entities is the process of identifying and removing duplicate or redundant data from a dataset. This matters because duplicate data can slow down processing, lead to inconsistencies in analysis or reporting and cause bad customer experiences. Deduplication can be done manually, by comparing and eliminating duplicates, or it can be automated using software tools. These tools use algorithms to identify duplicates based on various criteria, such as exact or partial matching, fuzzy matching or machine learning.

Master data management (MDM) can help with deduplication by providing a centralized system for managing and maintaining a single, authoritative source of master data. Through automated matching and linking, MDM establishes a master record (i.e., golden record) for each entity and ensures that data is consistent, accurate and up-to-date across all systems and applications. MDM includes tools for data profiling, cleansing and matching, which can help identify and eliminate duplicates. For example, MDM can use algorithms to compare and match data from different sources, identifying duplicates based on criteria such as name, address, phone number or other identifying attributes. Deduplication can be done for product records as well. Through deduplication, MDM enhances the functionality of business systems such as CRM, customer support and ecommerce.

MDM can also help prevent the creation of duplicate data in the first place. By enforcing standard data entry rules and using data validation techniques, MDM can ensure that new data entered into the system is accurate and consistent with existing data. Deduplication improves data quality, reduces storage costs and increases efficiency.
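As a minimal sketch of automated fuzzy duplicate detection, the example below uses Python's standard `difflib.SequenceMatcher` as a stand-in for the matching algorithms an MDM tool would use. The compared attributes (name and address), the sample records and the 0.85 threshold are assumptions for illustration:

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(value: str) -> str:
    """Lowercase, drop punctuation and collapse whitespace before comparing."""
    kept = "".join(ch for ch in value.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


def similarity(a: dict, b: dict, fields: tuple[str, ...] = ("name", "address")) -> float:
    """Average fuzzy similarity (0..1) over the chosen match attributes."""
    scores = [SequenceMatcher(None, normalize(a[f]), normalize(b[f])).ratio() for f in fields]
    return sum(scores) / len(scores)


def find_duplicates(records: list[dict], threshold: float = 0.85) -> list[tuple[str, str]]:
    """Return pairs of record IDs whose similarity meets the threshold."""
    return [
        (a["id"], b["id"])
        for a, b in combinations(records, 2)  # every pair once: O(n^2)
        if similarity(a, b) >= threshold
    ]


customers = [
    {"id": "1", "name": "Jane Smith", "address": "12 High Street, Leeds"},
    {"id": "2", "name": "Jane  Smith.", "address": "12 High St., Leeds"},
    {"id": "3", "name": "Peter Jones", "address": "4 Mill Lane, York"},
]
print(find_duplicates(customers))
```

Comparing every pair grows quadratically with the number of records, so production matching usually narrows the candidates first, for example by only comparing records that share a postcode.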

See also: Golden Record, Data Cleansing

Digital Catalog

A digital catalog is an electronic version of a product catalog or service catalog. It is a database or repository of information about products or services, including descriptions, specifications, prices, images, and other details. A digital catalog can be used by businesses to showcase and promote their products or services online, making them accessible to a global audience. Customers can browse the catalog, search for specific products or services, view images and descriptions and make purchases directly through the website. Digital catalogs are often used in ecommerce and other industries that rely heavily on digital marketing and sales. They can be easily updated and maintained, making it possible to add new products or services, update prices and specifications and make other changes as needed. Master data management for product information (PIM) provides a single source of truth for product data, which can be used to populate a variety of channels, such as digital catalogs, ecommerce sites, marketplaces and other sales and marketing channels. Thus, master data management is the necessary foundation for a well-functioning digital catalog.

See also: PIM, Data Syndication

Data Marketplace

A data marketplace is an online platform where data providers can sell or share data with data consumers. It facilitates the exchange of data between organizations, helping them access a broader range of data sources for analytics and decision-making.

Data Masking

Data masking is a technique used to protect sensitive data by replacing it with realistic but false data. It is commonly used to secure data in non-production environments and ensure privacy while preserving the data's usability for testing and development.
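One common masking technique is deterministic substitution: each sensitive value is replaced by a realistic but false value derived from a salted hash, so the same input always maps to the same substitute. The sketch below assumes hypothetical field names, a made-up list of substitute names and a secret salt:

```python
import hashlib

# Hypothetical pool of realistic substitute values.
FAKE_FIRST_NAMES = ["Alex", "Sam", "Robin", "Kim", "Jordan", "Taylor"]
SALT = "non-prod-secret"  # assumption: kept secret and never shipped with the masked data


def _hash(value: str) -> str:
    return hashlib.sha256((SALT + value).encode("utf-8")).hexdigest()


def mask_first_name(name: str) -> str:
    """Replace a real first name with a realistic but false one.

    The same input always yields the same substitute, so joins and
    duplicate checks still behave consistently in test data.
    """
    return FAKE_FIRST_NAMES[int(_hash(name), 16) % len(FAKE_FIRST_NAMES)]


def mask_email(email: str) -> str:
    """Keep a valid email format but hide the real address."""
    return f"user_{_hash(email)[:10]}@example.com"


def mask_record(record: dict) -> dict:
    """Return a masked copy; non-sensitive fields are left unchanged."""
    masked = dict(record)
    masked["first_name"] = mask_first_name(record["first_name"])
    masked["email"] = mask_email(record["email"])
    return masked


print(mask_record({"id": 7, "first_name": "Jane", "email": "jane@corp.example"}))
```

Because the mapping is repeatable, masked data keeps its joins and duplicate patterns, which is useful for testing. For the same reason it is a form of pseudonymization, and the salt must be protected.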

See also: Synthetic Data

Digital Product Passport

The digital product passport is a sustainability requirement introduced by the European Union under the Ecodesign for Sustainable Products Regulation (ESPR) to “make sustainable products the norm […] and ensure sustainable growth.” The passport is a digital record of data covering all stages of a product's lifecycle. It captures the environmental impact that accumulates across the supply chain from multiple suppliers and their actions, as well as information about the product's afterlife, such as disposal, recyclability, recovery, refurbishment, remanufacturing, predictive maintenance and reuse. Master data management can support the creation of digital product passports by managing sustainability data from multiple domains, including suppliers, locations and products.

Data Profiling

Data profiling is the process of examining the data available in an existing data source and collecting statistics and information about that data. It helps in assessing the quality of data, identifying anomalies, and understanding data distributions, patterns, and potential relationships.
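A minimal profiling sketch in Python, assuming a single column of hypothetical postcode values, collects counts of missing and distinct values, the most common value and value "patterns" (digits shown as 9, letters as A), which is one simple way to surface anomalies:

```python
from collections import Counter


def value_pattern(value: str) -> str:
    """Reduce a value to its shape: digits become 9, letters become A."""
    return "".join("9" if c.isdigit() else "A" if c.isalpha() else c for c in value)


def profile_column(values: list) -> dict:
    """Collect basic statistics about one column of an existing data source."""
    present = [v for v in values if v not in (None, "")]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "most_common": Counter(present).most_common(1)[0][0] if present else None,
        "patterns": dict(Counter(value_pattern(str(v)) for v in present)),
    }


postcodes = ["2100", "2100", "8000", "DK-9000", None, ""]  # hypothetical column
print(profile_column(postcodes))
```

Here the pattern AA-9999 stands out against the dominant 9999 shape, which is the kind of anomaly profiling is meant to reveal.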

Digital Transformation

Digital transformation is the process of using digital technologies to fundamentally change how businesses operate, interact with customers and create value. It involves integrating digital technologies into all aspects of a business, from operations and customer experience to products and services. The goal of digital transformation is to improve business performance, increase efficiency and create new revenue streams. It is driven by consumer demands, which force organizations to rethink their business strategy in order to deliver excellent customer experiences, as well as by growing compliance requirements and the deluge of data generated by IoT and various platforms. Hence, digital transformation is both a necessity and a competitive factor, as it has a major impact on efficiency and workflows.

Master data management (MDM) can play a crucial role in enabling digital transformation as the backbone architecture that ensures data is trustworthy and fit for purpose. It provides a centralized system for managing and maintaining a single, authoritative source of data. By establishing a trusted master record for each entity, MDM can ensure that data is consistent, accurate and up-to-date across all systems and applications. This makes MDM a key enabler of digital transformation, as it provides the foundation for data-driven decision making, process automation and customer engagement. With a centralized MDM system, businesses can ensure that all stakeholders have timely and reliable access to the data they need for their transformation. MDM can also improve operational efficiency by automating manual processes and reducing the time and resources required for data management. By streamlining data workflows and ensuring data quality, MDM can help businesses improve productivity, reduce errors and enhance collaboration.

Digital Twin

A digital twin is a virtual representation, or data replica, of an entity such as an asset, product, person or process, developed to support business objectives. The digital twin of a person is also referred to as a customer 360° view. Manufacturers can use digital twins to visualize their real-world manufacturing processes. A digital twin mirrors the physical product as a virtual product, helping companies ensure that what they are producing is what they actually intend to produce. Master data management supports the building of digital twins by providing a repository for rich and accurate data about assets.

Direct to Consumer (D2C, DTC)

D2C selling refers to manufacturers and brands that, in addition to selling through retailers and dealers, establish their own retail sales channels, such as ecommerce or brick-and-mortar shops. While the D2C approach can be profitable and good for brand building, it also challenges brands to manage their product data so that it supports great consumer experiences and omnichannel selling.

D-U-N-S

A D-U-N-S (Data Universal Numbering System) number is a unique nine-digit identification number assigned to businesses and organizations by Dun & Bradstreet (D&B), a provider of commercial data, analytics, and insights. The D-U-N-S number is commonly used by lenders, suppliers and business credit reporting agencies to establish a company's creditworthiness and financial stability. The D-U-N-S number is also used by various organizations and government entities to identify and verify a business's existence and location. Master data management (MDM) can source the D-U-N-S number via integration to enrich a company's party data with unique identifiers.

EAM - Enterprise Asset Management

Enterprise Asset Management (EAM) is the process of managing an organization's physical assets throughout their lifecycle, from acquisition to disposal. EAM is typically used by organizations with large and complex physical assets, such as manufacturing plants, transportation companies, and utility providers. EAM software is used to track and manage assets such as machinery, equipment, vehicles, and buildings. It provides a centralized database for asset information, including maintenance schedules, warranties and repair histories. The software can also generate reports and analytics to help organizations optimize their asset utilization, reduce maintenance costs, and extend asset lifespan.

Master data management (MDM) can help describe how assets perform and relate functionally. MDM is inherently designed to cross silos of systems, business units and processes in order to unite information in a way that supports decision making. MDM is therefore positioned as a technology that can help unify and standardize views of asset information to help reduce the number of processes that an organization must work with.

See also: Asset Data

ECLASS

ECLASS is a global industry standard for the classification and description of products and services across countries and languages. The classification system is maintained by the industry association ECLASS e.V. ECLASS enables the digital exchange of product master data. Every product and service is classified in a four-level hierarchy and identified by an eight-digit code. ECLASS is used in Product Information Management (PIM), ecommerce and master data management systems.
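Because each hierarchy level is encoded in the code itself, a class code can be split into its levels. The sketch assumes the common ECLASS layout of two digits per level; the level names and the example code are illustrative only:

```python
# Level names as commonly used for ECLASS; treat them as an assumption here.
LEVEL_NAMES = ("segment", "main group", "group", "commodity class")


def eclass_levels(code: str) -> dict[str, str]:
    """Split an eight-digit class code into its four hierarchy levels.

    Assumes two digits per level; each level is returned as the code prefix
    that identifies it (e.g. the segment is the first two digits).
    """
    digits = code.replace("-", "")
    if len(digits) != 8 or not digits.isdigit():
        raise ValueError(f"not an eight-digit class code: {code!r}")
    return {name: digits[: 2 * (i + 1)] for i, name in enumerate(LEVEL_NAMES)}


print(eclass_levels("27-02-26-01"))  # illustrative code, hyphenated for readability
```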

EDW

See Data Warehouse

Enterprise Data

Enterprise data refers to all the data that is created, processed and used within an organization. This includes data from various sources, such as internal systems, external partners, customers and other stakeholders. Enterprise data can take many forms, such as structured data (e.g., databases, spreadsheets), unstructured data (e.g., emails, documents), semi-structured data (e.g., XML files) and multimedia data (e.g., images, videos).

By implementing a master data management strategy, organizations can improve the accuracy, consistency and completeness of their master data across different systems and business units. This can help create context for all enterprise data, improving analytics and decision making.

ERP - Enterprise Resource Planning

Enterprise resource planning (ERP) is a type of business management software that integrates and manages an organization's core business processes in real time. ERP systems are designed to provide a unified view of an organization's operations and data. ERP systems typically include modules for various business functions such as finance, accounting, inventory management, human resources, procurement and customer relationship management. These modules are integrated into a single system, which enables data sharing and visibility across different departments and functions. Businesses can have several ERP systems.

A master data management solution can enhance the functionality of the ERP by ensuring that the data from each of the data domains used by the ERP is accurate, up-to-date and synchronized across the multiple ERP instances. Although ERP systems also manage master data, they do not have the same comprehensive governance capabilities as MDM systems.

ETIM

ETIM is an industry classification system that stands for "Electro Technical Information Model". It is a standardized classification system for technical product data in the electrical, plumbing, heating, ventilation and air conditioning (HVAC) industries. The ETIM system provides a framework for classifying technical information about products in a standardized format that can be easily shared among manufacturers, distributors, retailers and end-users. The ETIM classification system is based on a hierarchical structure, with each level providing more detail about the product. ETIM also includes a standardized set of product attributes and values that can be used to describe the features of a product in a consistent way. This allows different manufacturers to use the same terminology to describe their products, making it easier for customers to compare and choose products.

Via integration or built-in ETIM compliance, a master data management platform can further enrich product information and facilitate data sharing between manufacturers and distributors/retailers.

ETL - Extract, Transform and Load

ETL (Extract, Transform, Load) is a data integration process used to move data from multiple sources, transform it into a consistent format, and load it into a target database or data warehouse. The three stages of ETL are:

Extract: Data is extracted from various sources such as databases, flat files, APIs or web services.

Transform: The extracted data is cleaned, normalized and transformed into a consistent format to ensure that it is accurate, complete and usable.

Load: The transformed data is loaded into a target database or data repository where it can be analyzed and used for various purposes, such as business intelligence reporting, analytics or master data management.
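The three stages can be sketched end to end with Python's standard library. The CSV content, column names and cleansing rules are hypothetical, and an in-memory SQLite database stands in for the target data warehouse:

```python
import csv
import io
import sqlite3

# Extract source: a hypothetical CSV export, inlined for the example.
SOURCE_CSV = """customer_id,name,country
 1 ,  jane smith ,dk
2,Peter Jones,GB
3,,SE
"""


def extract(text: str) -> list[dict]:
    """Extract: read raw rows from the source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: clean and normalize; drop rows that fail a completeness rule."""
    cleaned = []
    for row in rows:
        name = " ".join(row["name"].split()).title()
        if not name:
            continue  # reject incomplete records instead of loading them
        cleaned.append((int(row["customer_id"]), name, row["country"].strip().upper()))
    return cleaned


def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer (id INTEGER PRIMARY KEY, name TEXT, country TEXT)"
    )
    conn.executemany("INSERT INTO customer VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(SOURCE_CSV)), conn)
print(conn.execute("SELECT * FROM customer ORDER BY id").fetchall())
```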

GDPR

The General Data Protection Regulation (GDPR) is a binding European Union regulation, proposed by the European Commission and adopted by the European Parliament and the Council. The regulation, which has applied since 25 May 2018, has replaced former European Union data protection directives and diverse national laws. The GDPR was introduced to strengthen people's right to data protection, including the right to be forgotten, the right of access to one's own personal data, and the rights to data portability, rectification and objection. Affected businesses have to meet several requirements in relation to how they collect and use the personal data of people in the EU, whether or not the company itself is European.

Master data management supports compliance with the GDPR by consolidating party data, providing transparency into data processing and by supporting consent management.

Generative AI

Generative AI refers to artificial intelligence techniques that can generate new data or content based on patterns learned from existing data. It can be used for tasks such as language generation, image synthesis and code generation. Retailers can use generative AI to create compelling and unique product descriptions. This is particularly useful for ecommerce websites or businesses with large product catalogs, where writing a description for each product can be time-consuming and tedious. With generative AI, a machine learning model can be trained on a dataset of existing product descriptions, allowing it to learn the patterns and structures commonly used in them. Because the generated content can only be as good as the product data it is based on, it is important that the underlying product data is governed and trustworthy.

More information:

- Generative AI for Product Information Needs Governed Data

- Questionable data. Questionable decisions. Questionable business.

- What is Product Master Data Management?

Golden Record

The golden record is the consolidated master data record of a customer, supplier or product, based on the most trusted information. It is also referred to as a single view: one unified, trusted version of the data that captures all the information you need to know about a customer or a product. It is very common for organizations to have several records of the same object in the same system or in different systems, and some of these records may be inaccurate or incomplete. Through data governance capabilities such as matching, linking and merging, master data management (MDM) unifies this information into one trusted version.
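A simple way to picture how a golden record is assembled is attribute-level survivorship: for each attribute, take the value from the most trusted source and break ties by the most recent update. The source ranking, field names and records below are assumptions for illustration, not a recommended rule set:

```python
from datetime import date

# Assumed trust ranking of source systems (higher wins); purely illustrative.
SOURCE_TRUST = {"erp": 3, "crm": 2, "webshop": 1}


def build_golden_record(records: list[dict], fields: list[str]) -> dict:
    """Attribute-level survivorship: most trusted source first, then most recent."""
    golden = {}
    for name in fields:
        candidates = [r for r in records if r.get(name)]  # ignore empty values
        if candidates:
            best = max(candidates, key=lambda r: (SOURCE_TRUST.get(r["source"], 0), r["updated"]))
            golden[name] = best[name]
    return golden


source_records = [
    {"source": "crm", "updated": date(2024, 2, 1), "name": "Jane M. Smith",
     "email": "jane.smith@example.com", "phone": None},
    {"source": "erp", "updated": date(2023, 6, 1), "name": "Jane Smith",
     "email": None, "phone": "+45 11 22 33 44"},
    {"source": "webshop", "updated": date(2024, 5, 1), "name": "J Smith",
     "email": "js@example.com", "phone": "+45 55 66 77 88"},
]
print(build_golden_record(source_records, ["name", "email", "phone"]))
```

Note that the email survives from the CRM because the more trusted ERP record has no value for it, so the golden record can combine attributes from several sources.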

More information:

- What is a Golden Customer Record in Master Data Management?

- What Is Master Data Governance – And Why You Need It?

GRI

The Global Reporting Initiative (GRI) Standards are a sustainability reporting framework covering more than 140 topics, such as biodiversity, tax, waste, emissions, diversity, equality, and health and safety. To report in accordance with GRI, companies can use master data management to ensure data transparency and to manage and share sustainability data.

GS1

GS1 is a global standards organization that develops and maintains a range of standards for the identification, capture and sharing of product and supply chain information. It is used by companies in a wide range of industries, including retail, healthcare, food service and manufacturing. The GS1 system enables companies to use a common language to identify products, locations, and assets, and to share data with trading partners in a consistent and standardized way. The GS1 standard includes a range of standardized identifiers, barcodes, and electronic data interchange (EDI) messages that enable companies to identify and track products as they move through the supply chain.

Some of the key components of the GS1 system include:

- Global Trade Item Number (GTIN): a unique identifier used to identify and track products at the item level

- Global Location Number (GLN): a unique identifier for physical locations such as warehouses, retail stores and manufacturing facilities

- Global Data Synchronization Network (GDSN): a system that enables companies to share product information with trading partners in a standardized format, ensuring that everyone has access to the same information

- Electronic Product Code (EPC): a unique identifier for individual items that is encoded in an RFID tag, enabling companies to track items at the individual unit level
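Every GTIN ends with a check digit calculated with GS1's modulo-10 algorithm: the preceding digits are weighted alternately 3 and 1, starting from the rightmost one. A minimal validation sketch in Python (the function names are hypothetical):

```python
def gtin_check_digit(body: str) -> int:
    """GS1 modulo-10 check digit for a GTIN without its last digit."""
    total = 0
    # Weights alternate 3, 1, 3, ... starting from the rightmost digit of the body.
    for position, char in enumerate(reversed(body)):
        total += int(char) * (3 if position % 2 == 0 else 1)
    return (10 - total % 10) % 10


def is_valid_gtin(gtin: str) -> bool:
    """Check length (GTIN-8, -12, -13 or -14), digits only, and the check digit."""
    if len(gtin) not in (8, 12, 13, 14) or not gtin.isdigit():
        return False
    return gtin_check_digit(gtin[:-1]) == int(gtin[-1])


print(is_valid_gtin("4006381333931"))  # True: a commonly cited sample EAN-13
print(is_valid_gtin("4006381333932"))  # False: same number, wrong check digit
```

Weighting from the right means the same function works for all GTIN lengths, since shorter GTINs are equivalent to longer ones padded with leading zeros.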

A master data management solution can support and integrate the GS1 standards across industries.

See also: Data Pool

Headless Commerce

Headless commerce separates the customer-facing front-end technology from the back-end business systems to allow for increased flexibility. In other words, the back-end of a commerce solution is decoupled from the direct consumer experience. Headless commerce allows for a more focused approach to the customer experience and uses integrations with the back-end to improve the ability to provide services. Information flows between the back-end and front-end via application programming interfaces (APIs). A product information management (PIM) solution provides the foundational, enriched product data that serves as the single source of truth, and may also include digital asset management (DAM) capabilities for sharing operational, marketing and rich product data across channels worldwide.

See also: Composable Commerce

Identity Resolution

Identity resolution is a data management process related to customer master data management. Customer master data management involves a data governance process that enables organizations to link customer data across disparate systems and sources to form a single, accurate and complete view of the customer. One key aspect of this process is identity resolution, which involves gathering different data sets and identifying non-obvious relationships to link a customer's traces and accounts to their unique identity. Customers often have various digital identities that need to be consolidated into one customer view. By successfully linking all these identities, identity resolution allows for customer-centric marketing.
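A minimal sketch of deterministic identity resolution links identity records that share an identifier value, using a union-find (disjoint-set) structure so that links are followed transitively. The identifier keys (email, phone, device_id) and the records are hypothetical:

```python
class UnionFind:
    """Disjoint-set structure that groups linked identities into clusters."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving keeps trees shallow
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        self.parent[self.find(a)] = self.find(b)


MATCH_KEYS = ("email", "phone", "device_id")  # assumed deterministic identifiers


def resolve_identities(identities: list[dict]) -> list[set[str]]:
    """Cluster identity records that share any identifier value, transitively."""
    uf = UnionFind()
    first_seen: dict[tuple[str, str], str] = {}  # (key, value) -> first identity with it
    for ident in identities:
        uf.find(ident["id"])  # register identities that link to nothing
        for key in MATCH_KEYS:
            value = ident.get(key)
            if not value:
                continue
            if (key, value) in first_seen:
                uf.union(ident["id"], first_seen[(key, value)])
            else:
                first_seen[(key, value)] = ident["id"]
    clusters: dict[str, set[str]] = {}
    for ident in identities:
        clusters.setdefault(uf.find(ident["id"]), set()).add(ident["id"])
    return list(clusters.values())


traces = [
    {"id": "web-17", "email": "jane@example.com"},
    {"id": "app-4", "email": "jane@example.com", "device_id": "D9"},
    {"id": "pos-88", "phone": "+4512345678"},
    {"id": "cc-2", "phone": "+4512345678", "device_id": "D9"},
    {"id": "web-99", "email": "bob@example.com"},
]
print(resolve_identities(traces))
```

Transitive linking is powerful but can over-merge, for example when a household shares an email address, so such rules are often combined with probabilistic matching and clerical review.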

See also: Customer MDM, Golden Record

Implementation Style

When you implement a master data management solution, you can choose between four different methodologies, or styles, according to your business needs: (1) Registry, (2) Transaction/Centralized, (3) Consolidation or (4) Coexistence. The right choice depends on the business situation and on the organization's technology, people and aspirations. Implementation styles may also differ depending on the type of master data.

IoT - Internet of Things

The Internet of Things (IoT) is the network of physical devices embedded with connectivity technology that enables them to connect and exchange data. Devices include sensors, cameras, consumption meters and wearables. IoT can help companies maintain assets and equipment. A master data management solution supports IoT initiatives by providing a 360-degree view of the connected assets. Asset data management is important to provide context for IoT data, also known as sensor data or time series data.

More information:

- How to Leverage Internet of Things with Master Data Management

- Asset Data Governance is Central for Asset Management

Legacy Systems

A legacy system is an applied technology that has become outdated and been superseded by newer systems, but is still in use for a variety of reasons. A legacy system is no longer sold on the software market and is no longer updated or supported by its vendor. A master data management system can help retire many legacy systems by capturing and cleansing existing master data. With the MDM approach, a business can continue operations during a critical migration process and, furthermore, increase efficiency and improve data governance.

More information:

- Data Migration to SAP S/4HANA ERP - The Fast and Safe Approach with MDM

- Four Types of IT Systems That Should Be Sunsetted – & What to Consider

Location Data

Data about locations. Locations, such as stores, warehouses, factories and other real estate, are assets that need accurate descriptions. Master data management can provide additional value by adding location data to the mix of master data, resulting in a multidomain solution. Product information can greatly benefit from being enriched with location data to provide insight into where a certain product is offered or from where it originates. Restaurants, hotels and venues can use location data management to advertise their location-based services. By enriching store location data with local points of interest information and localized consumer demographics, retailers can offer relevant products and services and update customers with the latest store information.

More information:

- Location, Location, Location: Managing WHERE You Do Business

- How Location Data Adds Value to Master Data Projects

- Restaurant Chain Combines Location and Product Data to Drive More Customer Engagement

Machine Learning

Machine learning (ML) is a subfield of artificial intelligence that focuses on enabling machines to learn from data without being explicitly programmed. ML algorithms use statistical models to recognize patterns in data and then use those patterns to make predictions or decisions. An ML model is built from sample data, known as training data, and can be retrained as more data is fed into the system, which allows continuous improvement. In master data management solutions, ML is used to reduce repetitive tasks such as classification, image metadata tagging and image deduplication.
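As a toy illustration of a classifier that learns from labeled samples instead of hand-written rules, the sketch below trains a multinomial naive Bayes model on short product descriptions and then predicts a taxonomy category for a new product. Naive Bayes is chosen here only because it is one of the simplest ML classifiers. The categories and training texts are hypothetical, and production MDM classifiers typically use richer features, such as attributes and images, and more capable models.

```python
import math
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase whitespace tokenizer (deliberately simple)."""
    return text.lower().split()


class NaiveBayesClassifier:
    """Multinomial naive Bayes text classifier with add-one (Laplace) smoothing."""

    def __init__(self) -> None:
        self.class_counts: Counter = Counter()          # examples per category
        self.word_counts: dict = defaultdict(Counter)   # token counts per category
        self.vocab: set = set()

    def train(self, text: str, label: str) -> None:
        """Learn from one labeled example; more examples can be added at any time."""
        tokens = tokenize(text)
        self.class_counts[label] += 1
        self.word_counts[label].update(tokens)
        self.vocab.update(tokens)

    def predict(self, text: str) -> str:
        """Return the category with the highest posterior log-probability."""
        if not self.class_counts:
            raise ValueError("classifier has not been trained")
        total = sum(self.class_counts.values())
        best_label, best_score = "", -math.inf
        for label, n_examples in self.class_counts.items():
            counts = self.word_counts[label]
            n_tokens = sum(counts.values())
            score = math.log(n_examples / total)  # log prior
            for token in tokenize(text):
                # Smoothed log likelihood; unseen tokens get a small non-zero probability.
                score += math.log((counts[token] + 1) / (n_tokens + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


if __name__ == "__main__":
    clf = NaiveBayesClassifier()
    for text, category in [
        ("cordless drill 18v battery", "Power Tools"),
        ("impact driver brushless motor", "Power Tools"),
        ("cotton t-shirt crew neck", "Apparel"),
        ("denim jeans slim fit", "Apparel"),
    ]:
        clf.train(text, category)
    print(clf.predict("brushless cordless drill"))  # Power Tools
```

Because `train` can be called again at any time, newly reviewed products can be fed back into the model. This mirrors the continuous improvement described above.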

Master Data

Master data is essential to the operation of a business or organization. It includes key business information, categorized in master data domains such as customer and product data, as well as other critical assets, including suppliers, locations and equipment. Master data is typically defined by a set of attributes describing the asset. Unlike other types of data, e.g., transactional data or real-time data, master data is characterized by low volatility. Master data is often managed in many different systems according to domain; for example, product master data is managed in a PIM system and customer master data in a CRM. However, consolidating and governing master data in a single source can enhance insight and efficiency for departments and applications that rely on clean master data. Effective management of master data is essential for ensuring data quality, consistency and accuracy throughout the organization, and is key to supporting business processes and decision-making.

Master Data Management

Master data management (MDM) is the core process of acquiring, managing, governing, enriching and sharing master data according to the data policy and business rules of your company. The efficient management of master data in a central repository gives you a single authoritative view of information and eliminates costly inefficiencies caused by data silos. Master data management is necessary to support your business initiatives and objectives through identification, linking and syndication of information and content across products, customers, locations, suppliers, digital assets and more. Master data can be managed using spreadsheets or various business systems, such as ERP and CRM. However, to automate the process of managing master data and to ensure the uniformity of master data, many organizations use a dedicated MDM solution that is capable of connecting data silos and providing clean master data to the business systems that need it to operate efficiently.

Matching and Linking

Matching and linking are two key processes in the creation of golden records. A golden record is a single, accurate and comprehensive view of an entity, such as a customer or a product, created by combining and reconciling data from multiple sources. Matching involves identifying and grouping together records that refer to the same entity, based on a set of matching rules or criteria. These rules may compare fields such as name, address and phone number to detect duplicate records within the same source or across different sources.
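A minimal sketch of a rule-based matching pass follows, assuming records with `id`, `name`, `address` and `phone` fields (a hypothetical layout). Each field is first normalized and then compared with a fuzzy similarity measure. The weighted sum is checked against a threshold. The weights, the threshold and the "last 8 digits" phone rule are illustrative assumptions. Python's standard `difflib.SequenceMatcher` stands in for the comparators an MDM tool would provide.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical matching rule: per-field weights and a match threshold.
WEIGHTS = {"name": 0.4, "address": 0.3, "phone": 0.3}
THRESHOLD = 0.85


def normalize(field: str, value: str) -> str:
    """Remove formatting differences that should not prevent a match."""
    if field == "phone":
        # Keep digits only and compare the last 8 to ignore country/area prefixes (assumption).
        return re.sub(r"\D", "", value)[-8:]
    # Lowercase, replace punctuation with spaces and collapse whitespace.
    return " ".join(re.sub(r"[^\w\s]", " ", value.lower()).split())


def similarity(a: str, b: str) -> float:
    """Fuzzy similarity in [0, 1]; a missing value never counts as a match."""
    if not a or not b:
        return 0.0
    return SequenceMatcher(None, a, b).ratio()


def match_score(r1: dict, r2: dict) -> float:
    """Weighted similarity of two records over the fields in the rule."""
    return sum(
        weight * similarity(normalize(field, r1.get(field, "")),
                            normalize(field, r2.get(field, "")))
        for field, weight in WEIGHTS.items()
    )


def find_matches(records: list[dict]) -> list[tuple[str, str, float]]:
    """Compare every pair of records and keep the pairs that reach the threshold."""
    matches = []
    for r1, r2 in combinations(records, 2):
        score = match_score(r1, r2)
        if score >= THRESHOLD:
            matches.append((r1["id"], r2["id"], round(score, 3)))
    return matches


if __name__ == "__main__":
    records = [
        {"id": "crm-1", "name": "Jane Smith", "address": "12 High Street, Leeds",
         "phone": "+44 113 496 0000"},
        {"id": "erp-9", "name": "Jane  Smith", "address": "12 High St., Leeds",
         "phone": "0113 496 0000"},
        {"id": "crm-2", "name": "John Brown", "address": "4 Park Road, York",
         "phone": "+44 1904 555 123"},
    ]
    print(find_matches(records))
```

The sketch compares every pair, which grows quadratically with the number of records. It is meant only to show how rules, normalization and thresholds fit together.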

Linking refers to consolidating, or merging, the matched records into a single golden record. This involves resolving conflicts and inconsistencies between the records and combining the information from each record into a single, comprehensive view of the entity. When all source records are linked to the golden record, the merge function selects one survivor, and the remaining records become non-survivors. The golden record is based only on the survivor, and the non-survivors are deleted from the system. Linking may also involve enriching the data with information from external sources.
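Survivor selection for one group of already-matched records can be sketched as follows. The survivor rule here is a hypothetical example: prefer the most trusted source system, then the most recently updated record. The source ranking, the `G-` golden ID format and the field names are assumptions made for illustration; real MDM tools let you configure survivorship rules.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical trust ranking of source systems: lower number = more trusted.
SOURCE_PRIORITY = {"erp": 0, "crm": 1, "web": 2}


@dataclass
class SourceRecord:
    record_id: str
    source: str
    updated: date
    attributes: dict


def select_survivor(group: list[SourceRecord]) -> SourceRecord:
    """Survivor rule: most trusted source first, then the most recently updated record."""
    return min(
        group,
        key=lambda r: (SOURCE_PRIORITY.get(r.source, len(SOURCE_PRIORITY)),
                       -r.updated.toordinal()),
    )


def merge(group: list[SourceRecord]) -> tuple[dict, list[str]]:
    """Build the golden record from the survivor and return the non-survivor IDs."""
    survivor = select_survivor(group)
    golden = {"golden_id": f"G-{survivor.record_id}", **survivor.attributes}
    # The non-survivors are the records the merge removes from the system.
    non_survivors = [r.record_id for r in group if r is not survivor]
    return golden, non_survivors


if __name__ == "__main__":
    group = [
        SourceRecord("crm-1", "crm", date(2024, 3, 1), {"name": "Jane Smith", "city": "Leeds"}),
        SourceRecord("erp-9", "erp", date(2023, 6, 1), {"name": "Jane Smyth", "city": "Leeds"}),
        SourceRecord("erp-4", "erp", date(2024, 1, 15), {"name": "Jane Smith", "city": "Leeds"}),
    ]
    golden, removed = merge(group)
    print(golden)   # {'golden_id': 'G-erp-4', 'name': 'Jane Smith', 'city': 'Leeds'}
    print(removed)  # ['crm-1', 'erp-9']
```

Although crm-1 is the most recent record overall, erp-4 survives. The rule ranks source trust above recency.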

The creation of golden records through matching and linking is an iterative process that requires ongoing monitoring and refinement to ensure accuracy and completeness. This process is typically supported by master data management (MDM) software, which provides tools and workflows for managing the creation and maintenance of golden records.

See also: Golden Record

More information:

- What Is Master Data Governance – And Why You Need It?

- The Complete Guide: How to Get a 360° Customer View

Metadata Management

The management of data about data. Metadata is data that describes other data: it provides context, meaning and structure. Metadata facilitates the discovery, management and use of data, and is essential for ensuring that data is accurate, consistent and interoperable. There are many different types of metadata. Examples include the author and date of creation of a document, the file format and size, the data type and structure, and the keywords and tags used to describe the content. Metadata can be stored and managed separately from the data itself and used to support search, discovery and analysis.

While metadata management and master data management systems intersect, they provide two different frameworks for solving data problems such as data quality and data governance.

Multidomain

A multidomain master data management solution manages the data of several enterprise domains, such as product and supplier, customer and product, or any other combination of two or more domains. A multidomain MDM solution provides unified governance across all domains. Multidomain MDM allows you to establish relationships between data from different domains and to bring governance to these relationships. The combination and common governance of different master data domains can provide new insights into business-critical questions, such as where certain products are sold, how they are used and which suppliers are delivering the same product. These insights can help reduce costs and open up new revenue streams.
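One cross-domain question from the paragraph above is "which suppliers deliver the same product?". The sketch below answers it by keeping product and supplier master data as separate domains and storing the relationships between them as records of their own. All IDs and names are hypothetical. The existence check on both ends of each relationship is a simple stand-in for cross-domain governance.

```python
from collections import defaultdict

# Two master data domains (hypothetical records).
PRODUCTS = {"P-100": "Cordless drill 18V", "P-200": "Safety gloves"}
SUPPLIERS = {"S-1": "Acme Tools", "S-2": "BuildCo", "S-3": "SafeWear"}

# Cross-domain relationships: which supplier delivers which product.
# "S-9" does not exist in the supplier domain, so governance should reject it.
SUPPLIES = [("S-1", "P-100"), ("S-2", "P-100"), ("S-3", "P-200"), ("S-9", "P-200")]


def products_with_multiple_suppliers(
    products: dict[str, str],
    suppliers: dict[str, str],
    supplies: list[tuple[str, str]],
) -> dict[str, list[str]]:
    """Return products delivered by more than one supplier, with the supplier names."""
    by_product: dict[str, set[str]] = defaultdict(set)
    for supplier_id, product_id in supplies:
        # Governance check across domains: ignore links to records that do not exist.
        if supplier_id in suppliers and product_id in products:
            by_product[product_id].add(supplier_id)
    return {
        products[product_id]: sorted(suppliers[s] for s in supplier_ids)
        for product_id, supplier_ids in sorted(by_product.items())
        if len(supplier_ids) > 1
    }


if __name__ == "__main__":
    print(products_with_multiple_suppliers(PRODUCTS, SUPPLIERS, SUPPLIES))
    # {'Cordless drill 18V': ['Acme Tools', 'BuildCo']}
```

Safety gloves do not appear in the result. Their second supplier link points to a record that is missing from the supplier domain.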

See also: Data Domain, Zones of Insight

More information:

- The Difference Between Multidomain and Multiple-Domain Master Data Management

- How to Turn Your Data Silos Into Zones of Insight

- Multidomain MDM Supports Data-Driven Growth and Operations at Rituals Cosmetics

Multiple Domain

A multiple-domain master data management solution manages master data from different domains in one application but has separate governance for each domain. Unlike multidomain solutions, this type of solution may require significant coding or configuration to enable true management across multiple domains.

Natural Language Processing

Natural language processing (NLP) is a field of artificial intelligence (AI) that focuses on enabling machines to understand and interpret human language. It involves developing algorithms and models that can analyze and generate natural language, such as text or speech, and perform language translation, sentiment analysis, speech recognition and more. NLP has many applications in fields such as customer service, healthcare, finance and education. For example, it can be used to develop chatbots and virtual assistants that interact with customers in natural language. Master data management (MDM) is important for NLP because NLP algorithms rely on accurate and consistent data to generate meaningful insights and results. NLP applications typically require access to large volumes of high-quality data, and MDM provides a framework for managing and governing this data to ensure that it is accurate, complete and consistent across different systems and applications.

Omnichannel

Omnichannel is a marketing strategy that relies on a single source pushing product data out to business systems (data silos), where the data is stored and goes through individual transformation and cleansing. The focus is on providing customers with a consistent experience across all channels, including in-store, online, mobile and social media, and on allowing them to switch between channels and touchpoints. In an omnichannel approach, each channel is managed separately, and the customer experience is optimized for each individual channel.

More information:

- Omnichannel Strategies for Retail

- What is Omnichannel Retailing and What is the Role of Data Management?

Party Data

In relation to master data management, party data is the overarching data domain referring to individuals and organizations, typically customers and suppliers, but also employees or college students. A party can also be a relation, such as an attorney or a family member of a customer, and party data then also covers these related parties. Party data management can be part of an MDM setup, and these relations can be organized using hierarchy management. Party data can also be classified by its source: first-party data is your own data, second-party data is someone else’s first-party data shared with you, and third-party data is collected by someone with no relation to you.

More information:

- What is Party Data? All You Need to Know About Party Data Management

- The 26 Questions to Ask Before Building Your Business Case for Customer Data Transparency

PII - Personally Identifiable Information

PII refers to any data that can be used to identify a specific individual. This can include a person's name, date of birth, social security number, driver's license number, passport number, email address, phone number or physical address. PII can be either sensitive or non-sensitive, depending on the context in which it is used. Sensitive PII includes information that, if disclosed, could result in harm or embarrassment to an individual, such as their financial information, medical records or personal relationships. Non-sensitive PII includes information that is not considered harmful by itself but could be used to identify an individual when combined with other information. Laws and regulations protecting data privacy, such as the GDPR, require organizations to take appropriate measures to safeguard PII and prevent unauthorized access or disclosure. Failure to protect PII can result in legal and financial consequences, as well as damage to an organization's reputation. Customer or party data management can help organizations comply with PII regulations, e.g., by applying data governance rules to data processing, managing consent and maintaining a single source of truth.
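The following minimal sketch illustrates how a governance rule can be applied to data processing. Before a party record is shared with a consuming system, PII fields the recipient is not authorized to see are masked, and sensitive PII is withheld entirely. The field classification, the role names and the masking format are all hypothetical. Date of birth is treated as sensitive here purely for illustration; which fields count as PII or sensitive PII depends on the applicable regulation and your own policy.

```python
# Hypothetical classification of party attributes.
PII_FIELDS = {"name", "email", "phone", "address", "date_of_birth"}
SENSITIVE_PII_FIELDS = {"date_of_birth"}

# Hypothetical policy: PII fields each consuming role may receive unmasked.
ROLE_PERMISSIONS = {
    "customer_service": {"name", "email", "phone", "address"},
    "analytics": set(),
}


def mask(value: str) -> str:
    """Keep the first character and hide the rest."""
    return value[:1] + "*" * max(len(value) - 1, 0)


def apply_pii_policy(record: dict[str, str], role: str) -> dict[str, str]:
    """Return the version of a party record that the given role is allowed to see."""
    allowed = ROLE_PERMISSIONS.get(role, set())  # unknown roles get no PII in clear text
    shared = {}
    for field, value in record.items():
        if field in SENSITIVE_PII_FIELDS and field not in allowed:
            continue  # withhold sensitive PII entirely
        if field in PII_FIELDS and field not in allowed:
            shared[field] = mask(value)  # non-sensitive PII is masked
        else:
            shared[field] = value  # non-PII, or PII the role is permitted to see
    return shared


if __name__ == "__main__":
    party = {"party_id": "C-42", "name": "Ann Lee", "email": "ann@example.com",
             "date_of_birth": "1990-05-01", "segment": "Gold"}
    print(apply_pii_policy(party, "analytics"))
    print(apply_pii_policy(party, "customer_service"))
```

With these rules, the analytics role receives a masked name and email and no date of birth. Customer service sees the contact details in clear text but still not the date of birth.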

PIM - Product Information Management

A PIM system is a software solution that is designed to manage and centralize product information, including product descriptions, attributes, images, videos and other related data. PIM systems are commonly used by ecommerce businesses, retailers and manufacturers to manage large volumes of product information across multiple channels, such as websites, mobile apps and marketplaces. By using a PIM system, organizations can ensure that product information is accurate, up-to-date and consistent across all channels, which can improve customer experience and increase sales.

Some of the key features of a PIM system may include:

- Centralized product information storage and management
- Workflow management for product data creation, review and approval
- Product data enrichment capabilities, such as the ability to add metadata, attributes and digital assets
- Data governance and data quality management tools to ensure accuracy and consistency of data
- Integration with other systems such as ecommerce platforms, content management systems and ERP systems
- Multi-language and multi-currency support
- Analytics and reporting capabilities to track product performance and user engagement

Many companies use a product master data management solution to build their PIM system because of the product MDM's enhanced capabilities, such as management of large data volumes and a wide range of integration options.

Platform