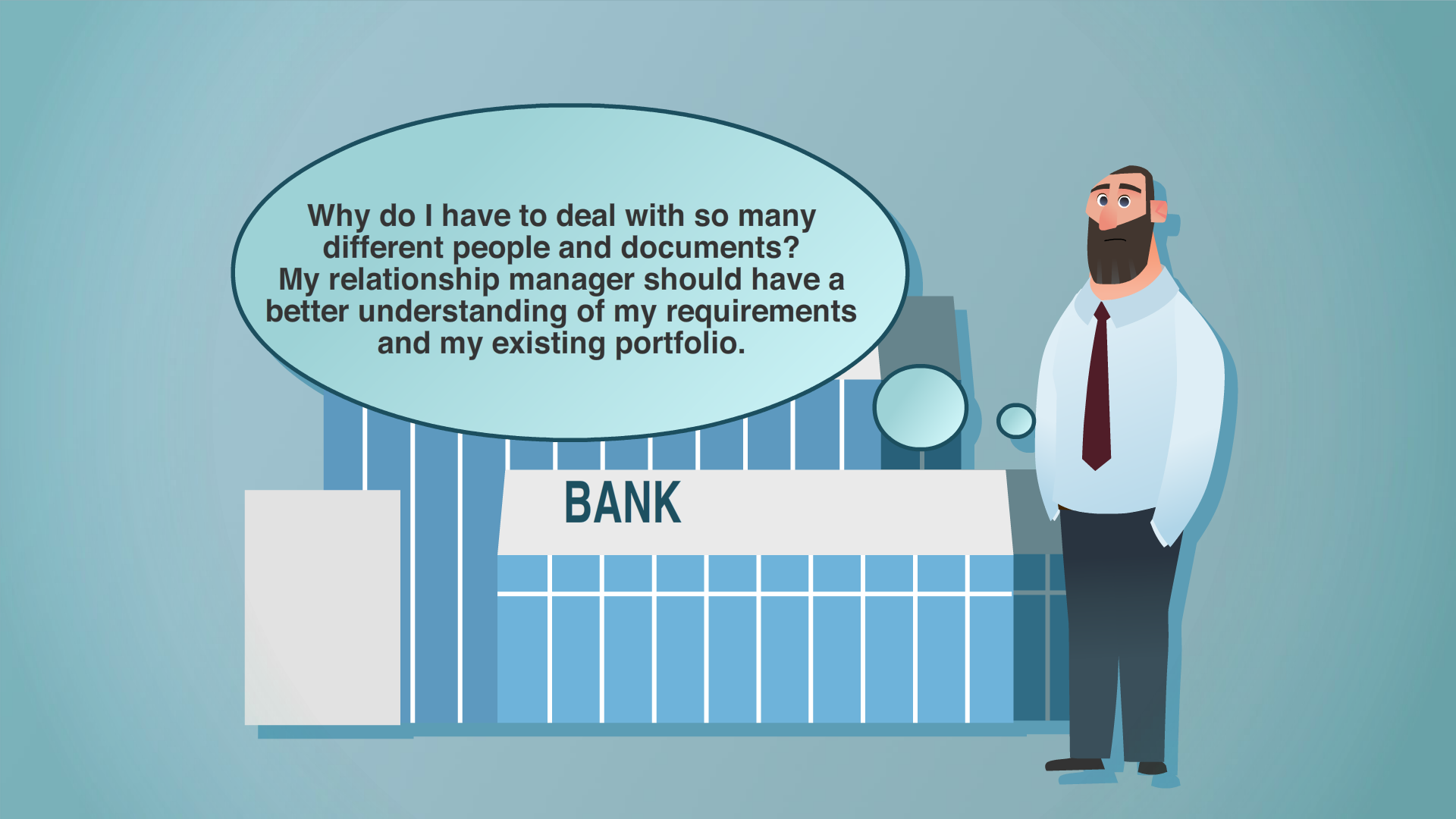

You have spent years mastering data quality and governance, and you have clean master records. But then your AI still makes decisions that contradict all of that work.

It's not your data that's failing. Your machines just don't understand what the data means. They don’t have the context to interpret it correctly.

Clean data provides the raw material. Without explicit meaning encoded into the structure itself, machines guess at relationships and constraints.

The complexity appears quickly. Your product data might include multiple dimensions, weights and voltages, along with variants that are hard to tell apart from their names alone. Which voltage specification applies to which variant?

Your AI has to guess.

So, it reaches conclusions that sound plausible but drift from what your enterprise intended. It’s more likely to miss constraints and increase governance risk.

A semantic data graph fixes this.

It represents master data as a network of entities and relationships enriched with explicit business meaning. Machines learn not just what your data contains, but also how it's meant to be used.

Why data quality isn't the same as data understanding at scale

Master data management (MDM) is essential. Data quality, harmonization, deduplication and governance are all non-negotiable foundations. Your AI cannot reason well about bad data.

But clean data only solves half the problem.

At enterprise scale, data quality and data understanding diverge. Clean data ensures correctness at the record level. But it does not explain:

- What the data means

- What you can safely use it for

- What you shouldn't use it for

Let's use an example:

Your product data is clean: Product A and Product B are distinct records, properly harmonized, fully governed. Your AI accesses this data without an issue. Yet when it needs to determine if Product B can substitute for Product A, it doesn't recognize that Product B requires a different voltage specification. The data was clean. But the relationship was invisible to the machine.
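To make the gap concrete, here is a minimal Python sketch of the substitution example. Everything in it is hypothetical: the `Product` class, the SKUs, the voltage values and the `can_substitute` rule are illustrative assumptions, not part of any specific platform. The point is that the rule only works once the voltage requirement is an explicit, machine-readable part of the model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    sku: str
    name: str
    voltage_v: int  # required supply voltage in volts (illustrative attribute)


# Hypothetical records: both are clean and valid on their own.
PRODUCT_A = Product(sku="A-100", name="Product A", voltage_v=230)
PRODUCT_B = Product(sku="B-200", name="Product B", voltage_v=110)


def can_substitute(original: Product, candidate: Product) -> tuple[bool, str]:
    """Explicit substitution rule: the candidate must require the same voltage."""
    if candidate.voltage_v != original.voltage_v:
        return False, (
            f"{candidate.name} requires {candidate.voltage_v} V, "
            f"but {original.name} requires {original.voltage_v} V"
        )
    return True, "voltage requirements match"


allowed, reason = can_substitute(PRODUCT_A, PRODUCT_B)
print(allowed, reason)
```

Both records pass every quality check. The substitution is rejected only because the constraint is stated, not inferred.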

This is the core gap. Your AI infers meaning from naming patterns, data structure and statistical correlations. That works in limited scenarios. But with complex data, this approach breaks down. The AI reaches conclusions that sound plausible but drift subtly from enterprise intent. It violates governance policies. It misses constraints that should be obvious.

And the greater the complexity, the bigger the problem.

A single product with five attributes is manageable. But if it has 50 attributes – some of which are variants, some of which are hierarchical – you need semantics to navigate it correctly.

You need explicit meaning. The business definitions, relationships and constraints that give your data purpose and direction. When your AI understands what the data actually means and how it should be used, it makes better decisions. Decisions that align with what your business knows.

And that is exactly what you get with semantic data graphs.

What semantic data graphs are and why they are so important

A semantic data graph represents your master data as a network of entities and relationships enriched with explicit business meaning. It tells machines not just what your data contains, but how it's meant to be used.

Think of it as a machine-readable model of your business that captures:

- Business definitions (what "supplier" means in your context, what "available for sale" really signifies)

- Relationships and hierarchies (how products link to suppliers, customers connect to contracts)

- Constraints and rules (which supplier-region combinations are permitted, which certifications are non-negotiable)

- Governance metadata (who owns what, where changes are allowed, what lifecycle states matter)

The critical piece is natural language.

When you label a relationship "supplier is geographically restricted to," an LLM can connect that to what it already knows about geography and restrictions. Without this bridge, your semantic data graph is just structured metadata. With it, AI becomes contextually aware.
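A small Python sketch can show what a natural-language relationship label looks like in practice. This assumes a deliberately simple representation: each relationship is a subject, a readable label and an object. The `Edge` class, the `describe` helper and the sample entities are hypothetical and only illustrate the idea.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    subject: str
    label: str  # natural-language relationship label
    obj: str


# Hypothetical relationships for illustration only.
EDGES = [
    Edge("Supplier X", "is geographically restricted to", "Europe"),
    Edge("Supplier X", "supplies", "Product A"),
    Edge("Product A", "requires certification", "ISO 13485"),
]


def describe(entity: str, edges: list[Edge]) -> list[str]:
    """Return plain-language statements about an entity, usable as LLM context."""
    return [f"{e.subject} {e.label} {e.obj}." for e in edges if e.subject == entity]


for sentence in describe("Supplier X", EDGES):
    print(sentence)
```

Because the labels are ordinary language, each edge reads as a sentence a language model can relate to what it already knows, with no separate translation step.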

You might wonder how this differs from an ontology.

A semantic data graph is one way to represent an ontology. But what matters is not the representation – it's the ability to relate your specific business knowledge to the semantic understanding embedded in LLMs. That link is what makes AI understand your business instead of just accessing your data.

Your semantic graph enhances your MDM. It does not replace it. Clean data is still the foundation. Semantics add the understanding layer that makes AI reasoning safe and consistent.

How semantic data graphs bring the guardrails your AI needs at scale

Your AI works fine with simple data. But when you add complexity, you’ll see gaps immediately.

Rules shift by context. A supplier that's fine in Europe may not be allowed in North America. The meaning of "product lifecycle state" changes by region and business unit. For example, a product might be "active in Europe" but "discontinued in North America."

If these rules are not explicit, your AI breaks them. Semantic data graphs fix this by embedding the rules with the data itself.

Instead of writing business rules in documentation or burying logic inside code, you put them in the semantic layer. Your AI doesn't have to guess. When it encounters supplier data, the restrictions are already there.
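As a rough illustration of a context-dependent rule, the sketch below keys lifecycle state by product and region instead of storing one global status. The `LIFECYCLE_STATE` table and both helper functions are assumptions made for this example, not a real schema.

```python
# Hypothetical lifecycle data: the state depends on product AND region.
LIFECYCLE_STATE = {
    ("Product A", "Europe"): "active",
    ("Product A", "North America"): "discontinued",
}


def lifecycle_state(product: str, region: str) -> str:
    """Look up the state for a specific region; refuse to guess if it is missing."""
    key = (product, region)
    if key not in LIFECYCLE_STATE:
        raise LookupError(f"No lifecycle state recorded for {product} in {region}")
    return LIFECYCLE_STATE[key]


def is_sellable(product: str, region: str) -> bool:
    """A product is sellable in a region only if it is active there."""
    return lifecycle_state(product, region) == "active"


print(is_sellable("Product A", "Europe"))
print(is_sellable("Product A", "North America"))
```

Raising an error for an unknown region is a deliberate choice here: a missing rule surfaces as a gap to fix instead of becoming a silent guess.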

Let’s look at an example:

Your procurement team needs a system to find suppliers for a product. If you just give the system clean data and no rules, it optimizes for price. It finds the cheapest supplier, period. But your business knows that certain suppliers don't work in certain regions. Some need certifications. Others have contractual preferences that matter more than cost.

The system doesn't know any of that. So, it makes bad recommendations.

But when you add semantics, everything changes:

Now the supplier data includes region restrictions. Certifications are marked as required or optional. Preferred relationships are visible. The system can still optimize, and it's far less likely to break your actual business rules.
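Here is one way the difference could look in code. This is a sketch under stated assumptions: the `Supplier` fields, the sample suppliers, the prices and the ranking rule (preferred suppliers first, then lowest price) are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Supplier:
    name: str
    unit_price: float
    allowed_regions: frozenset[str]
    certifications: frozenset[str] = frozenset()
    preferred: bool = False


# Hypothetical suppliers for illustration only.
SUPPLIERS = [
    Supplier("CheapCo", 8.0, frozenset({"North America"})),
    Supplier("EuroParts", 10.0, frozenset({"Europe"}), frozenset({"ISO 13485"})),
    Supplier("TrustedSupply", 11.0, frozenset({"Europe", "North America"}),
             frozenset({"ISO 13485"}), preferred=True),
]


def cheapest(suppliers: list[Supplier]) -> Supplier:
    """Price-only choice: what you get from clean data with no rules."""
    return min(suppliers, key=lambda s: s.unit_price)


def pick_supplier(suppliers: list[Supplier], region: str,
                  required_certs: frozenset[str]) -> Supplier | None:
    """Filter by explicit rules first, then rank preferred suppliers ahead of price."""
    eligible = [
        s for s in suppliers
        if region in s.allowed_regions and required_certs <= s.certifications
    ]
    if not eligible:
        return None  # no compliant supplier: escalate instead of guessing
    return min(eligible, key=lambda s: (not s.preferred, s.unit_price))


print(cheapest(SUPPLIERS).name)
choice = pick_supplier(SUPPLIERS, "Europe", frozenset({"ISO 13485"}))
print(choice.name if choice else "no compliant supplier")
```

The optimizer still runs. It simply runs inside the set of suppliers your rules allow.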

Why scale is such an issue here

When your AI comes across a product with 50 attributes – some regional, some variant-based, some hierarchical – it has to guess which ones matter for which decisions. Then multiply that across products, suppliers and customers.

The system runs into inconsistencies immediately. Without explicit meaning to anchor decisions, it starts guessing – and those guesses compound.

Semantic structure keeps it grounded. As complexity grows, your AI has access to the rules it needs to make better decisions. It's working from actual business logic instead of guessing. That's what reduces errors and keeps risk down.

AI stays predictable. And in the end, that’s what most enterprises really need.

How semantics let your AI act autonomously without you losing control

Most enterprises worry that autonomous AI means losing control. The reality is different. Semantic data graphs are how you keep control while letting AI act.

When your AI system has explicit business rules expressed in the data itself, it's far more likely to interpret your data correctly.

A data enrichment system that understands supplier restrictions can work independently. It won't break the rules because the rules aren't external constraints; they're part of what the system knows.

The same applies to:

- Procurement decisions

- Portfolio optimization

- Any workflow that currently needs human sign-off at every step

If your AI understands your business rules, it can make decisions faster without increasing risk.

This becomes urgent when AI moves beyond analysis into action

A system that answers questions can get away with loose understanding. But a system that makes changes needs to know what it's doing. It needs to understand which regions allow certain suppliers, which certifications are non-negotiable, which relationships matter strategically.
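For a system that makes changes, one simple pattern is to validate every proposed change against the explicit rules before applying it. The sketch below assumes hypothetical rule tables (`ALLOWED_REGIONS`, `REQUIRED_CERTS`, `SUPPLIER_CERTS`) and a hypothetical `SupplierAssignment` change type. It illustrates the pattern and is not any product's API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SupplierAssignment:
    supplier: str
    product: str
    region: str


# Hypothetical rules, as they might be read from a semantic layer.
ALLOWED_REGIONS = {"Supplier X": {"Europe"}}
REQUIRED_CERTS = {"Product A": {"ISO 13485"}}
SUPPLIER_CERTS = {"Supplier X": {"ISO 13485"}}


def violations(change: SupplierAssignment) -> list[str]:
    """List every rule the proposed change would break."""
    problems = []
    if change.region not in ALLOWED_REGIONS.get(change.supplier, set()):
        problems.append(f"{change.supplier} is not permitted in {change.region}")
    missing = (REQUIRED_CERTS.get(change.product, set())
               - SUPPLIER_CERTS.get(change.supplier, set()))
    if missing:
        problems.append(
            f"{change.supplier} lacks required certification(s): "
            f"{', '.join(sorted(missing))}"
        )
    return problems


def apply_change(change: SupplierAssignment,
                 assignments: list[SupplierAssignment]) -> tuple[bool, list[str]]:
    """Apply the change only when it breaks no rule; otherwise return the reasons."""
    problems = violations(change)
    if problems:
        return False, problems  # leave state untouched, hand over to a human
    assignments.append(change)
    return True, []


ledger: list[SupplierAssignment] = []
print(apply_change(SupplierAssignment("Supplier X", "Product A", "North America"), ledger))
print(apply_change(SupplierAssignment("Supplier X", "Product A", "Europe"), ledger))
```

Routine, compliant changes go through on their own. Anything that breaks a rule comes back with a reason a person can review.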

Semantics don't restrict what your AI can do; they expand it. You can hand off routine decisions to machines without those machines getting lost in a complex set of data. Without semantics, your AI is just guessing what your rules might be.

How to translate your business knowledge into machine-readable meaning, with Stibo Systems

Your business already knows its rules: supplier certifications, regional restrictions, preferred relationships and so on. They're embedded in how you operate every day.

The problem is your AI doesn't have access to this knowledge. So, it invents its own logic.

Our MDM platform lets you make that knowledge visible to machines. You associate semantic metadata directly with your data model. When you label a supplier relationship "geographically restricted to Europe," the system captures that. When you mark a certification "required for medical devices," that's now machine-readable.

It is as simple as this:

- Add descriptions in natural language as semantic metadata to your attributes and data types – explain your rules the way you would to a colleague

- Our embedded MCP server delivers this semantic information to your AI systems

- No separate platform. No migration. You're layering understanding onto master data you already manage
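To picture what the steps above produce, here is a generic Python sketch of attribute values paired with their natural-language descriptions. It does not show the platform's data model or the MCP server's interface. The attribute names, the descriptions and the `with_semantics` helper are hypothetical and only illustrate the shape of the information an AI system could receive.

```python
import json

# Hypothetical semantic metadata: plain-language descriptions per attribute.
SEMANTIC_METADATA = {
    "supplier.region_restriction": (
        "Regions where this supplier is allowed to deliver. "
        "Never propose the supplier outside these regions."
    ),
    "product.certification": (
        "Certifications the supplier must hold for this product. "
        "Required for medical devices."
    ),
}

# Hypothetical master data record.
RECORD = {
    "supplier.region_restriction": ["Europe"],
    "product.certification": ["ISO 13485"],
}


def with_semantics(record: dict, metadata: dict) -> dict:
    """Pair each attribute value with its natural-language description."""
    return {
        attr: {"value": value, "description": metadata.get(attr, "")}
        for attr, value in record.items()
    }


payload = json.dumps(with_semantics(RECORD, SEMANTIC_METADATA), indent=2)
print(payload)
```

The values stay exactly as they are in your master data. The descriptions travel with them, so the rule arrives together with the record it governs.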

Start with your most critical domains: products, customers and suppliers. Add semantic descriptions over time. Test with a pilot. Scale as confidence grows.

You’re making what your business already knows visible to the machine. And once your AI understands your rules, it’s finally ready to act.