Mar 9, 2026 • 7-minute read

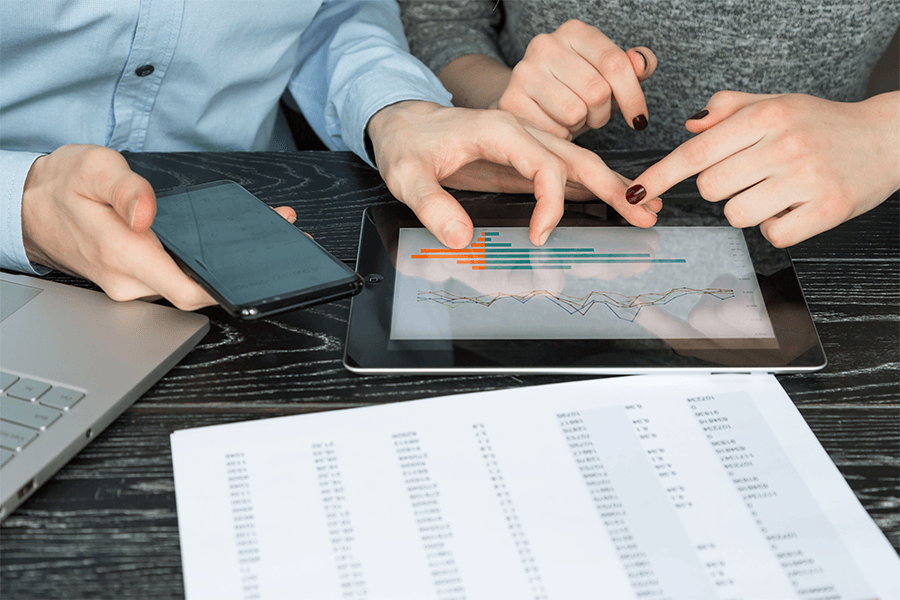

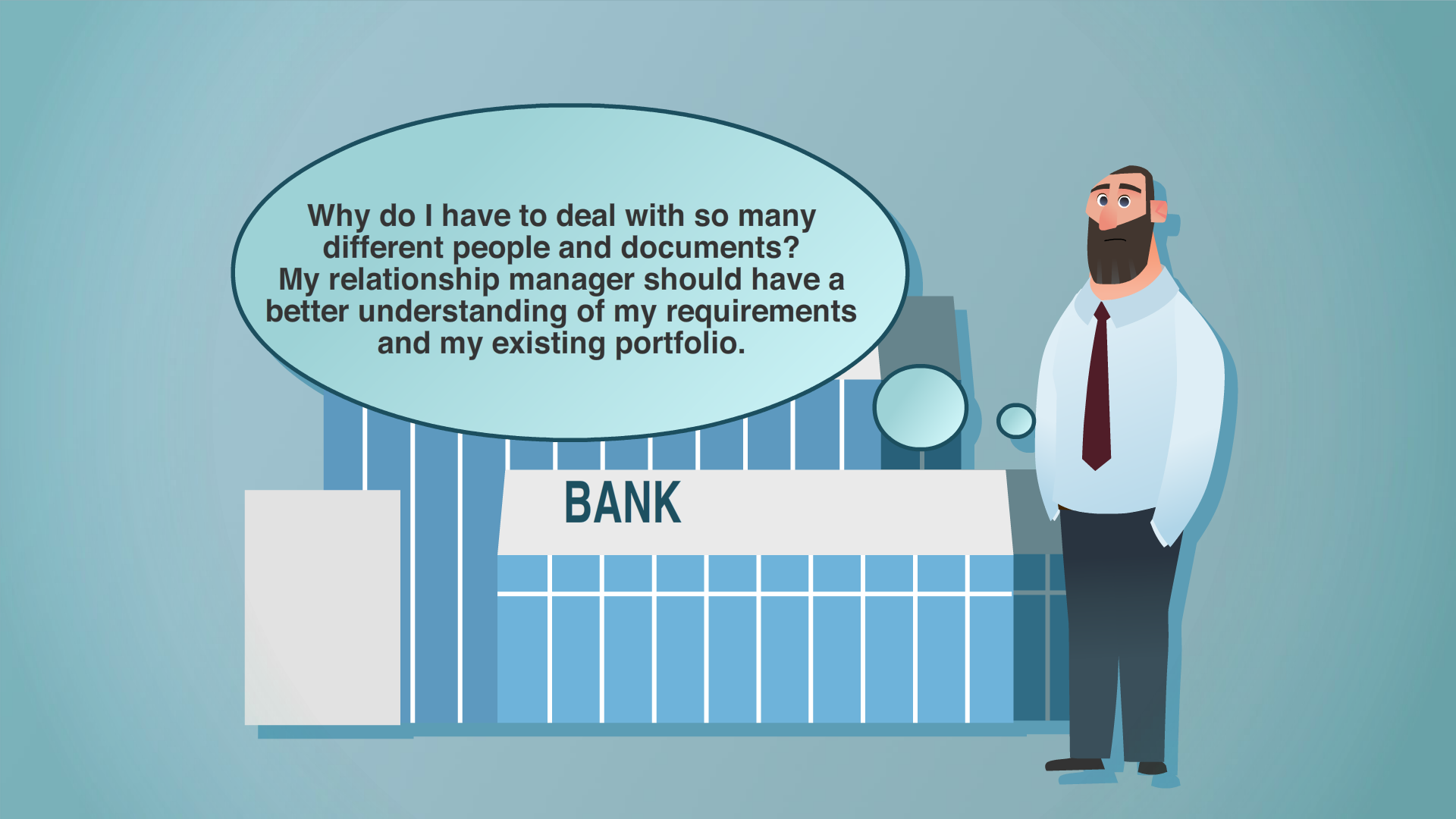

The trusted data foundation of intelligent enterprise

Every AI model is only as intelligent as the data it learns from. Every analytical dashboard is only as trustworthy as the data it displays. Yet across industries, from retail and healthcare to financial services and manufacturing, organizations struggle with a fundamental challenge: Their master data exists in silos, riddled with inconsistencies that undermine even the most sophisticated AI and analytics initiatives...


Discover the newest articles

Trending now

Olivier-Romain Jolly

Feb 24, 2026 • 6-minute read

Stibo Systems

Feb 17, 2026 • 4-minute read

Arnjah Dillard

Jan 27, 2026 • 8-minute read